Mirror of https://github.com/QwenLM/qwen-code.git (synced 2026-01-19 23:36:19 +00:00)

Compare commits: fix/acp-se ... feature/ad (18 commits)

| Author | SHA1 | Date |
|---|---|---|
| | 88bd8ddffd | |
| | af269e6474 | |
| | ef769c49bf | |
| | ec2aa6d86d | |
| | a684f07ff4 | |
| | 66ad936c31 | |
| | 8b5f198e3c | |
| | 79cce84280 | |
| | b9207c5884 | |
| | baf848a4d9 | |
| | d0104dc487 | |
| | 531062aeaf | |
| | 2852f48a4a | |
| | 04a11aa111 | |
| | d5ad3aebe4 | |
| | 98c680642f | |
| | e4efd3a15d | |
| | b923acd278 | |

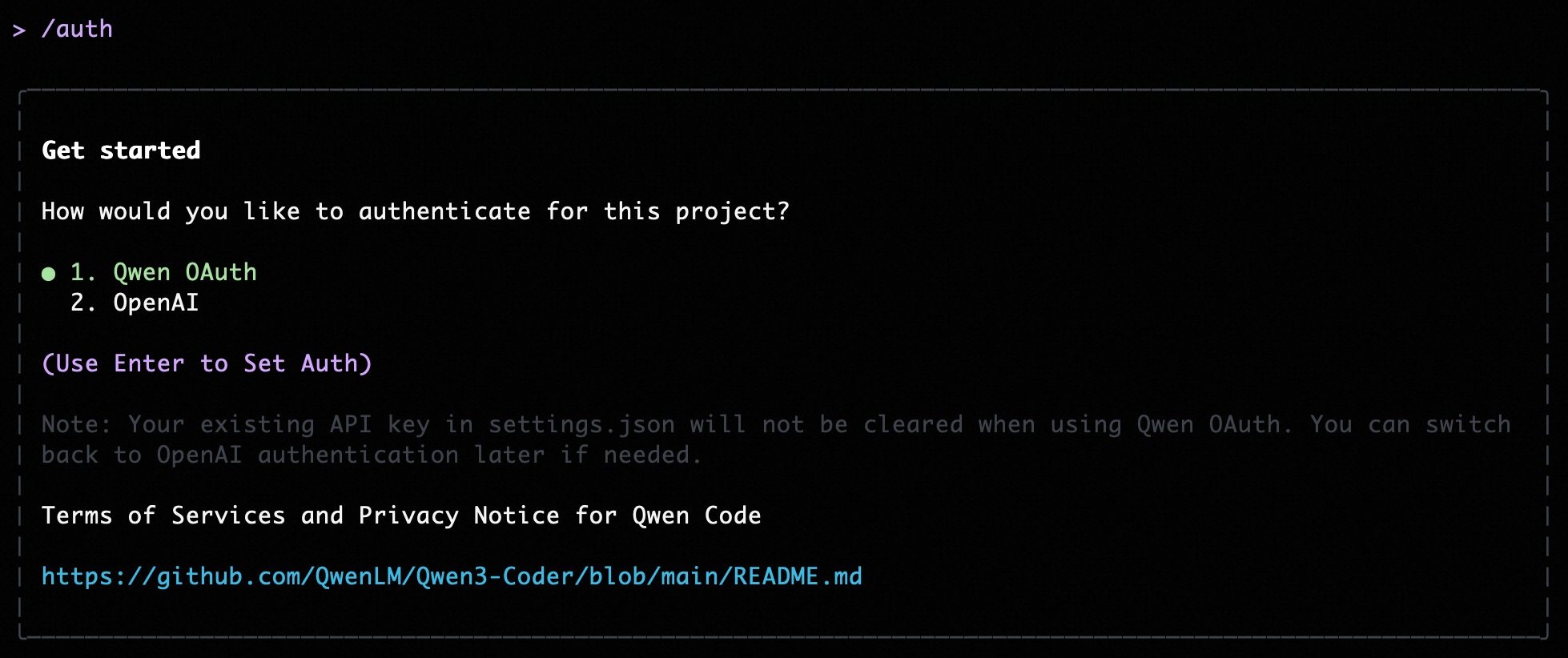

@@ -5,11 +5,13 @@ Qwen Code supports two authentication methods. Pick the one that matches how you

- **Qwen OAuth (recommended)**: sign in with your `qwen.ai` account in a browser.
- **OpenAI-compatible API**: use an API key (OpenAI or any OpenAI-compatible provider / endpoint).

## Option 1: Qwen OAuth (recommended & free) 👍

Use this if you want the simplest setup and you’re using Qwen models.

Use this if you want the simplest setup and you're using Qwen models.

- **How it works**: on first start, Qwen Code opens a browser login page. After you finish, credentials are cached locally so you usually won’t need to log in again.
- **How it works**: on first start, Qwen Code opens a browser login page. After you finish, credentials are cached locally so you usually won't need to log in again.
- **Requirements**: a `qwen.ai` account + internet access (at least for the first login).
- **Benefits**: no API key management, automatic credential refresh.
- **Cost & quota**: free, with a quota of **60 requests/minute** and **2,000 requests/day**.

@@ -24,15 +26,54 @@ qwen

Use this if you want to use OpenAI models or any provider that exposes an OpenAI-compatible API (e.g. OpenAI, Azure OpenAI, OpenRouter, ModelScope, Alibaba Cloud Bailian, or a self-hosted compatible endpoint).

### Quick start (interactive, recommended for local use)

### Recommended: Coding Plan (subscription-based) 🚀

When you choose the OpenAI-compatible option in the CLI, it will prompt you for:

Use this if you want predictable costs with higher usage quotas for the qwen3-coder-plus model.

- **API key**
- **Base URL** (default: `https://api.openai.com/v1`)
- **Model** (default: `gpt-4o`)

> [!IMPORTANT]
>
> Coding Plan is only available for users in China mainland (Beijing region).

> **Note:** the CLI may display the key in plain text for verification. Make sure your terminal is not being recorded or shared.

- **How it works**: subscribe to the Coding Plan with a fixed monthly fee, then configure Qwen Code to use the dedicated endpoint and your subscription API key.
- **Requirements**: an active Coding Plan subscription from [Alibaba Cloud Bailian](https://bailian.console.aliyun.com/cn-beijing/?tab=globalset#/efm/coding_plan).
- **Benefits**: higher usage quotas, predictable monthly costs, access to latest qwen3-coder-plus model.
- **Cost & quota**: varies by plan (see table below).

#### Coding Plan Pricing & Quotas

| Feature             | Lite Basic Plan       | Pro Advanced Plan     |
| :------------------ | :-------------------- | :-------------------- |
| **Price**           | ¥40/month             | ¥200/month            |
| **5-Hour Limit**    | Up to 1,200 requests  | Up to 6,000 requests  |
| **Weekly Limit**    | Up to 9,000 requests  | Up to 45,000 requests |
| **Monthly Limit**   | Up to 18,000 requests | Up to 90,000 requests |
| **Supported Model** | qwen3-coder-plus      | qwen3-coder-plus      |

#### Quick Setup for Coding Plan

When you select the OpenAI-compatible option in the CLI, enter these values:

- **API key**: `sk-sp-xxxxx`
- **Base URL**: `https://coding.dashscope.aliyuncs.com/v1`
- **Model**: `qwen3-coder-plus`

> **Note**: Coding Plan API keys have the format `sk-sp-xxxxx`, which is different from standard Alibaba Cloud API keys.

#### Configure via Environment Variables

Set these environment variables to use Coding Plan:

```bash
export OPENAI_API_KEY="your-coding-plan-api-key" # Format: sk-sp-xxxxx
export OPENAI_BASE_URL="https://coding.dashscope.aliyuncs.com/v1"
export OPENAI_MODEL="qwen3-coder-plus"
```

For more details about Coding Plan, including subscription options and troubleshooting, see the [full Coding Plan documentation](https://bailian.console.aliyun.com/cn-beijing/?tab=doc#/doc/?type=model&url=3005961).
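As a rough illustration of how the three variables above fit together, the sketch below resolves them from an environment-like object. It assumes the interactive defaults quoted in this hunk (`https://api.openai.com/v1` and `gpt-4o`) also serve as fallbacks when a variable is unset; `resolveOpenAIEnv` and `OpenAIEnv` are hypothetical names and are not part of Qwen Code.

```typescript
// Hypothetical helper for illustration only; not a Qwen Code API.
interface OpenAIEnv {
  apiKey: string;
  baseUrl: string;
  model: string;
}

function resolveOpenAIEnv(
  env: Record<string, string | undefined>,
): OpenAIEnv {
  const apiKey = (env['OPENAI_API_KEY'] ?? '').trim();
  if (!apiKey) {
    throw new Error('OPENAI_API_KEY is not set');
  }
  return {
    apiKey,
    // Assumed fallbacks: the defaults shown for the interactive prompt.
    baseUrl:
      (env['OPENAI_BASE_URL'] ?? '').trim() || 'https://api.openai.com/v1',
    model: (env['OPENAI_MODEL'] ?? '').trim() || 'gpt-4o',
  };
}

// Values taken from the Coding Plan setup above.
const codingPlan = resolveOpenAIEnv({
  OPENAI_API_KEY: 'sk-sp-xxxxx',
  OPENAI_BASE_URL: 'https://coding.dashscope.aliyuncs.com/v1',
  OPENAI_MODEL: 'qwen3-coder-plus',
});
```

In a Node.js program the same function could be called with `process.env`; whether the CLI applies these fallbacks in exactly this way is not confirmed by the diff.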


### Other OpenAI-compatible Providers

If you are using other providers (OpenAI, Azure, local LLMs, etc.), use the following configuration methods.

### Configure via command-line arguments


@@ -120,6 +120,7 @@ Settings are organized into categories. All settings should be placed within the

    "generationConfig": {
      "timeout": 60000,
      "disableCacheControl": false,
      "contextWindowSize": 128000,
      "customHeaders": {
        "X-Request-ID": "req-123",
        "X-User-ID": "user-456"

@@ -134,6 +135,46 @@ Settings are organized into categories. All settings should be placed within the

}
```


**contextWindowSize:**

The `contextWindowSize` field allows you to manually override the automatic context window size detection. This is useful when you want to:

- **Optimize performance**: Limit context size to improve response speed
- **Control costs**: Reduce token usage to lower API call costs
- **Handle specific requirements**: Set a custom limit when automatic detection doesn't match your needs
- **Testing scenarios**: Use smaller context windows in test environments

**Values:**

- `-1` (default): Use automatic detection based on the model's capabilities
- Positive number: Manually specify the context window size in tokens (e.g., `128000` for 128k tokens)

**Example with contextWindowSize:**

```json
{
  "model": {
    "generationConfig": {
      "contextWindowSize": 128000 // Override to 128k tokens
    }
  }
}
```

Or use `-1` for automatic detection:

```json
{
  "model": {
    "generationConfig": {
      "contextWindowSize": -1 // Auto-detect based on model (default)
    }
  }
}
```

**Priority:** User-configured `contextWindowSize` > Automatic detection > Default value
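
The priority chain above can be sketched as a small resolver. This is only an illustration under stated assumptions: `resolveContextWindow`, the `detect` callback, and the fallback constant are hypothetical, and the fallback number is a placeholder rather than the value Qwen Code actually uses. Non-positive configured values are treated like `-1` here, which the documentation does not spell out.

```typescript
// Placeholder fallback for illustration; the real default is not shown in this diff.
const ASSUMED_DEFAULT_CONTEXT_WINDOW = 32768;

function resolveContextWindow(
  configured: number | undefined,
  detect: (model: string) => number | undefined,
  model: string,
): number {
  // 1. A positive user-configured value wins.
  if (configured !== undefined && configured > 0) {
    return configured;
  }
  // 2. -1 (or unset) falls through to automatic detection for the model.
  const detected = detect(model);
  if (detected !== undefined && detected > 0) {
    return detected;
  }
  // 3. Nothing configured and nothing detected: use the default.
  return ASSUMED_DEFAULT_CONTEXT_WINDOW;
}
```

For example, `resolveContextWindow(128000, detect, model)` returns the override regardless of what `detect` reports, while passing `-1` defers to `detect`.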


The `customHeaders` field allows you to add custom HTTP headers to all API requests. This is useful for request tracing, monitoring, API gateway routing, or when different models require different headers. If `customHeaders` is defined in `modelProviders[].generationConfig.customHeaders`, it will be used directly; otherwise, headers from `model.generationConfig.customHeaders` will be used. No merging occurs between the two levels.
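
The "no merging" rule can be illustrated with a short sketch. The function and type names are hypothetical, and the sketch assumes that "defined" means the `customHeaders` property is present at the provider level, even if it is an empty object.

```typescript
// Hypothetical types for illustration; not the project's actual config types.
type HeaderMap = Record<string, string>;

interface GenerationConfigLike {
  customHeaders?: HeaderMap;
}

function resolveCustomHeaders(
  providerConfig: GenerationConfigLike | undefined,
  modelConfig: GenerationConfigLike | undefined,
): HeaderMap {
  // Provider-level headers, when defined, replace the model-level ones entirely.
  if (providerConfig?.customHeaders !== undefined) {
    return { ...providerConfig.customHeaders };
  }
  // Otherwise fall back to model.generationConfig.customHeaders (or nothing).
  return { ...(modelConfig?.customHeaders ?? {}) };
}

const resolvedHeaders = resolveCustomHeaders(
  { customHeaders: { 'X-Request-ID': 'req-123' } },
  { customHeaders: { 'X-User-ID': 'user-456' } },
);
// resolvedHeaders contains only X-Request-ID; X-User-ID is not merged in.
```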


**model.openAILoggingDir examples:**


@@ -241,7 +282,6 @@ Per-field precedence for `generationConfig`:

| --- | --- | --- | --- |
| `context.fileName` | string or array of strings | The name of the context file(s). | `undefined` |
| `context.importFormat` | string | The format to use when importing memory. | `undefined` |
| `context.discoveryMaxDirs` | number | Maximum number of directories to search for memory. | `200` |
| `context.includeDirectories` | array | Additional directories to include in the workspace context. Specifies an array of additional absolute or relative paths to include in the workspace context. Missing directories will be skipped with a warning by default. Paths can use `~` to refer to the user's home directory. This setting can be combined with the `--include-directories` command-line flag. | `[]` |
| `context.loadFromIncludeDirectories` | boolean | Controls the behavior of the `/memory refresh` command. If set to `true`, `QWEN.md` files should be loaded from all directories that are added. If set to `false`, `QWEN.md` should only be loaded from the current directory. | `false` |
| `context.fileFiltering.respectGitIgnore` | boolean | Respect .gitignore files when searching. | `true` |

@@ -275,7 +315,7 @@ If you are experiencing performance issues with file searching (e.g., with `@` c

| `tools.truncateToolOutputThreshold` | number | Truncate tool output if it is larger than this many characters. Applies to Shell, Grep, Glob, ReadFile and ReadManyFiles tools. | `25000` | Requires restart: Yes |
| `tools.truncateToolOutputLines` | number | Maximum lines or entries kept when truncating tool output. Applies to Shell, Grep, Glob, ReadFile and ReadManyFiles tools. | `1000` | Requires restart: Yes |
| `tools.autoAccept` | boolean | Controls whether the CLI automatically accepts and executes tool calls that are considered safe (e.g., read-only operations) without explicit user confirmation. If set to `true`, the CLI will bypass the confirmation prompt for tools deemed safe. | `false` | |
| `tools.experimental.skills` | boolean | Enable experimental Agent Skills feature | `false` | |
| `tools.experimental.skills` | boolean | Enable experimental Agent Skills feature | `false` | |

#### mcp


@@ -530,16 +570,13 @@ Here's a conceptual example of what a context file at the root of a TypeScript p

This example demonstrates how you can provide general project context, specific coding conventions, and even notes about particular files or components. The more relevant and precise your context files are, the better the AI can assist you. Project-specific context files are highly encouraged to establish conventions and context.

- **Hierarchical Loading and Precedence:** The CLI implements a sophisticated hierarchical memory system by loading context files (e.g., `QWEN.md`) from several locations. Content from files lower in this list (more specific) typically overrides or supplements content from files higher up (more general). The exact concatenation order and final context can be inspected using the `/memory show` command. The typical loading order is:
- **Hierarchical Loading and Precedence:** The CLI implements a hierarchical memory system by loading context files (e.g., `QWEN.md`) from several locations. Content from files lower in this list (more specific) typically overrides or supplements content from files higher up (more general). The exact concatenation order and final context can be inspected using the `/memory show` command. The typical loading order is:
  1. **Global Context File:**
     - Location: `~/.qwen/<configured-context-filename>` (e.g., `~/.qwen/QWEN.md` in your user home directory).
     - Scope: Provides default instructions for all your projects.
  2. **Project Root & Ancestors Context Files:**
     - Location: The CLI searches for the configured context file in the current working directory and then in each parent directory up to either the project root (identified by a `.git` folder) or your home directory.
     - Scope: Provides context relevant to the entire project or a significant portion of it.
  3. **Sub-directory Context Files (Contextual/Local):**
     - Location: The CLI also scans for the configured context file in subdirectories _below_ the current working directory (respecting common ignore patterns like `node_modules`, `.git`, etc.). The breadth of this search is limited to 200 directories by default, but can be configured with the `context.discoveryMaxDirs` setting in your `settings.json` file.
     - Scope: Allows for highly specific instructions relevant to a particular component, module, or subsection of your project.
- **Concatenation & UI Indication:** The contents of all found context files are concatenated (with separators indicating their origin and path) and provided as part of the system prompt. The CLI footer displays the count of loaded context files, giving you a quick visual cue about the active instructional context.
- **Importing Content:** You can modularize your context files by importing other Markdown files using the `@path/to/file.md` syntax. For more details, see the [Memory Import Processor documentation](../configuration/memory).
- **Commands for Memory Management:**
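
The first two loading stages described in this hunk (the global file, then ancestor directories down to the working directory) can be sketched as a pure path computation. This is a simplified illustration, not the CLI's implementation: it handles POSIX-style paths only, does no file system access, omits the sub-directory scan, and assumes the upward walk stops before the home directory itself. All function names are hypothetical.

```typescript
// Hypothetical sketch: computes candidate context file paths, general to specific.
function parentDir(dir: string): string {
  const i = dir.lastIndexOf('/');
  return i <= 0 ? '/' : dir.slice(0, i);
}

function joinPath(dir: string, name: string): string {
  return dir === '/' ? `/${name}` : `${dir}/${name}`;
}

function contextFileCandidates(
  cwd: string,
  projectRoot: string | undefined, // directory containing .git, if known
  homeDir: string,
  fileName = 'QWEN.md',
): string[] {
  const upward: string[] = [];
  let dir = cwd;
  while (true) {
    // Assumption: the home directory is covered by the global file, so stop there.
    if (dir === homeDir) break;
    upward.push(joinPath(dir, fileName));
    if (dir === projectRoot || dir === '/') break;
    dir = parentDir(dir);
  }
  // Global file first, then the root-most ancestor down to the working directory.
  return [joinPath(`${homeDir}/.qwen`, fileName), ...upward.reverse()];
}

const candidates = contextFileCandidates(
  '/home/u/proj/src/ui',
  '/home/u/proj',
  '/home/u',
);
```

Ordering the result from general to specific mirrors the documented precedence, where later (more specific) files override or supplement earlier ones.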

@@ -311,9 +311,9 @@ function setupAcpTest(

|

||||

}

|

||||

});

|

||||

|

||||

it('returns modes on initialize and allows setting mode and model', async () => {

|

||||

it('returns modes on initialize and allows setting approval mode', async () => {

|

||||

const rig = new TestRig();

|

||||

rig.setup('acp mode and model');

|

||||

rig.setup('acp approval mode');

|

||||

|

||||

const { sendRequest, cleanup, stderr } = setupAcpTest(rig);

|

||||

|

||||

@@ -366,14 +366,8 @@ function setupAcpTest(

|

||||

const newSession = (await sendRequest('session/new', {

|

||||

cwd: rig.testDir!,

|

||||

mcpServers: [],

|

||||

})) as {

|

||||

sessionId: string;

|

||||

models: {

|

||||

availableModels: Array<{ modelId: string }>;

|

||||

};

|

||||

};

|

||||

})) as { sessionId: string };

|

||||

expect(newSession.sessionId).toBeTruthy();

|

||||

expect(newSession.models.availableModels.length).toBeGreaterThan(0);

|

||||

|

||||

// Test 4: Set approval mode to 'yolo'

|

||||

const setModeResult = (await sendRequest('session/set_mode', {

|

||||

@@ -398,15 +392,6 @@ function setupAcpTest(

|

||||

})) as { modeId: string };

|

||||

expect(setModeResult3).toBeDefined();

|

||||

expect(setModeResult3.modeId).toBe('default');

|

||||

|

||||

// Test 7: Set model using first available model

|

||||

const firstModel = newSession.models.availableModels[0];

|

||||

const setModelResult = (await sendRequest('session/set_model', {

|

||||

sessionId: newSession.sessionId,

|

||||

modelId: firstModel.modelId,

|

||||

})) as { modelId: string };

|

||||

expect(setModelResult).toBeDefined();

|

||||

expect(setModelResult.modelId).toBeTruthy();

|

||||

} catch (e) {

|

||||

if (stderr.length) {

|

||||

console.error('Agent stderr:', stderr.join(''));

|

||||

|

||||

@@ -70,13 +70,6 @@ export class AgentSideConnection implements Client {

|

||||

const validatedParams = schema.setModeRequestSchema.parse(params);

|

||||

return agent.setMode(validatedParams);

|

||||

}

|

||||

case schema.AGENT_METHODS.session_set_model: {

|

||||

if (!agent.setModel) {

|

||||

throw RequestError.methodNotFound();

|

||||

}

|

||||

const validatedParams = schema.setModelRequestSchema.parse(params);

|

||||

return agent.setModel(validatedParams);

|

||||

}

|

||||

default:

|

||||

throw RequestError.methodNotFound(method);

|

||||

}

|

||||

@@ -415,5 +408,4 @@ export interface Agent {

|

||||

prompt(params: schema.PromptRequest): Promise<schema.PromptResponse>;

|

||||

cancel(params: schema.CancelNotification): Promise<void>;

|

||||

setMode?(params: schema.SetModeRequest): Promise<schema.SetModeResponse>;

|

||||

setModel?(params: schema.SetModelRequest): Promise<schema.SetModelResponse>;

|

||||

}

|

||||

|

||||

@@ -165,11 +165,30 @@ class GeminiAgent {

|

||||

this.setupFileSystem(config);

|

||||

|

||||

const session = await this.createAndStoreSession(config);

|

||||

const availableModels = this.buildAvailableModels(config);

|

||||

const configuredModel = (

|

||||

config.getModel() ||

|

||||

this.config.getModel() ||

|

||||

''

|

||||

).trim();

|

||||

const modelId = configuredModel || 'default';

|

||||

const modelName = configuredModel || modelId;

|

||||

|

||||

return {

|

||||

sessionId: session.getId(),

|

||||

models: availableModels,

|

||||

models: {

|

||||

currentModelId: modelId,

|

||||

availableModels: [

|

||||

{

|

||||

modelId,

|

||||

name: modelName,

|

||||

description: null,

|

||||

_meta: {

|

||||

contextLimit: tokenLimit(modelId),

|

||||

},

|

||||

},

|

||||

],

|

||||

_meta: null,

|

||||

},

|

||||

};

|

||||

}

|

||||

|

||||

@@ -286,29 +305,15 @@ class GeminiAgent {

|

||||

async setMode(params: acp.SetModeRequest): Promise<acp.SetModeResponse> {

|

||||

const session = this.sessions.get(params.sessionId);

|

||||

if (!session) {

|

||||

throw acp.RequestError.invalidParams(

|

||||

`Session not found for id: ${params.sessionId}`,

|

||||

);

|

||||

throw new Error(`Session not found: ${params.sessionId}`);

|

||||

}

|

||||

return session.setMode(params);

|

||||

}

|

||||

|

||||

async setModel(params: acp.SetModelRequest): Promise<acp.SetModelResponse> {

|

||||

const session = this.sessions.get(params.sessionId);

|

||||

if (!session) {

|

||||

throw acp.RequestError.invalidParams(

|

||||

`Session not found for id: ${params.sessionId}`,

|

||||

);

|

||||

}

|

||||

return session.setModel(params);

|

||||

}

|

||||

|

||||

private async ensureAuthenticated(config: Config): Promise<void> {

|

||||

const selectedType = this.settings.merged.security?.auth?.selectedType;

|

||||

if (!selectedType) {

|

||||

throw acp.RequestError.authRequired(

|

||||

'Use Qwen Code CLI to authenticate first.',

|

||||

);

|

||||

throw acp.RequestError.authRequired('No Selected Type');

|

||||

}

|

||||

|

||||

try {

|

||||

@@ -377,43 +382,4 @@ class GeminiAgent {

|

||||

|

||||

return session;

|

||||

}

|

||||

|

||||

private buildAvailableModels(

|

||||

config: Config,

|

||||

): acp.NewSessionResponse['models'] {

|

||||

const currentModelId = (

|

||||

config.getModel() ||

|

||||

this.config.getModel() ||

|

||||

''

|

||||

).trim();

|

||||

const availableModels = config.getAvailableModels();

|

||||

|

||||

const mappedAvailableModels = availableModels.map((model) => ({

|

||||

modelId: model.id,

|

||||

name: model.label,

|

||||

description: model.description ?? null,

|

||||

_meta: {

|

||||

contextLimit: tokenLimit(model.id),

|

||||

},

|

||||

}));

|

||||

|

||||

if (

|

||||

currentModelId &&

|

||||

!mappedAvailableModels.some((model) => model.modelId === currentModelId)

|

||||

) {

|

||||

mappedAvailableModels.unshift({

|

||||

modelId: currentModelId,

|

||||

name: currentModelId,

|

||||

description: null,

|

||||

_meta: {

|

||||

contextLimit: tokenLimit(currentModelId),

|

||||

},

|

||||

});

|

||||

}

|

||||

|

||||

return {

|

||||

currentModelId,

|

||||

availableModels: mappedAvailableModels,

|

||||

};

|

||||

}

|

||||

}

|

||||

|

||||

@@ -15,7 +15,6 @@ export const AGENT_METHODS = {

|

||||

session_prompt: 'session/prompt',

|

||||

session_list: 'session/list',

|

||||

session_set_mode: 'session/set_mode',

|

||||

session_set_model: 'session/set_model',

|

||||

};

|

||||

|

||||

export const CLIENT_METHODS = {

|

||||

@@ -267,18 +266,6 @@ export const modelInfoSchema = z.object({

|

||||

name: z.string(),

|

||||

});

|

||||

|

||||

export const setModelRequestSchema = z.object({

|

||||

sessionId: z.string(),

|

||||

modelId: z.string(),

|

||||

});

|

||||

|

||||

export const setModelResponseSchema = z.object({

|

||||

modelId: z.string(),

|

||||

});

|

||||

|

||||

export type SetModelRequest = z.infer<typeof setModelRequestSchema>;

|

||||

export type SetModelResponse = z.infer<typeof setModelResponseSchema>;

|

||||

|

||||

export const sessionModelStateSchema = z.object({

|

||||

_meta: acpMetaSchema,

|

||||

availableModels: z.array(modelInfoSchema),

|

||||

@@ -605,7 +592,6 @@ export const agentResponseSchema = z.union([

|

||||

promptResponseSchema,

|

||||

listSessionsResponseSchema,

|

||||

setModeResponseSchema,

|

||||

setModelResponseSchema,

|

||||

]);

|

||||

|

||||

export const requestPermissionRequestSchema = z.object({

|

||||

@@ -638,7 +624,6 @@ export const agentRequestSchema = z.union([

|

||||

promptRequestSchema,

|

||||

listSessionsRequestSchema,

|

||||

setModeRequestSchema,

|

||||

setModelRequestSchema,

|

||||

]);

|

||||

|

||||

export const agentNotificationSchema = sessionNotificationSchema;

|

||||

|

||||

@@ -1,174 +0,0 @@

|

||||

/**

|

||||

* @license

|

||||

* Copyright 2025 Qwen

|

||||

* SPDX-License-Identifier: Apache-2.0

|

||||

*/

|

||||

|

||||

import { describe, it, expect, vi, beforeEach } from 'vitest';

|

||||

import { Session } from './Session.js';

|

||||

import type { Config, GeminiChat } from '@qwen-code/qwen-code-core';

|

||||

import { ApprovalMode } from '@qwen-code/qwen-code-core';

|

||||

import type * as acp from '../acp.js';

|

||||

import type { LoadedSettings } from '../../config/settings.js';

|

||||

import * as nonInteractiveCliCommands from '../../nonInteractiveCliCommands.js';

|

||||

|

||||

vi.mock('../../nonInteractiveCliCommands.js', () => ({

|

||||

getAvailableCommands: vi.fn(),

|

||||

handleSlashCommand: vi.fn(),

|

||||

}));

|

||||

|

||||

describe('Session', () => {

|

||||

let mockChat: GeminiChat;

|

||||

let mockConfig: Config;

|

||||

let mockClient: acp.Client;

|

||||

let mockSettings: LoadedSettings;

|

||||

let session: Session;

|

||||

let currentModel: string;

|

||||

let setModelSpy: ReturnType<typeof vi.fn>;

|

||||

let getAvailableCommandsSpy: ReturnType<typeof vi.fn>;

|

||||

|

||||

beforeEach(() => {

|

||||

currentModel = 'qwen3-code-plus';

|

||||

setModelSpy = vi.fn().mockImplementation(async (modelId: string) => {

|

||||

currentModel = modelId;

|

||||

});

|

||||

|

||||

mockChat = {

|

||||

sendMessageStream: vi.fn(),

|

||||

addHistory: vi.fn(),

|

||||

} as unknown as GeminiChat;

|

||||

|

||||

mockConfig = {

|

||||

setApprovalMode: vi.fn(),

|

||||

setModel: setModelSpy,

|

||||

getModel: vi.fn().mockImplementation(() => currentModel),

|

||||

} as unknown as Config;

|

||||

|

||||

mockClient = {

|

||||

sessionUpdate: vi.fn().mockResolvedValue(undefined),

|

||||

requestPermission: vi.fn().mockResolvedValue({

|

||||

outcome: { outcome: 'selected', optionId: 'proceed_once' },

|

||||

}),

|

||||

sendCustomNotification: vi.fn().mockResolvedValue(undefined),

|

||||

} as unknown as acp.Client;

|

||||

|

||||

mockSettings = {

|

||||

merged: {},

|

||||

} as LoadedSettings;

|

||||

|

||||

getAvailableCommandsSpy = vi.mocked(nonInteractiveCliCommands)

|

||||

.getAvailableCommands as unknown as ReturnType<typeof vi.fn>;

|

||||

getAvailableCommandsSpy.mockResolvedValue([]);

|

||||

|

||||

session = new Session(

|

||||

'test-session-id',

|

||||

mockChat,

|

||||

mockConfig,

|

||||

mockClient,

|

||||

mockSettings,

|

||||

);

|

||||

});

|

||||

|

||||

describe('setMode', () => {

|

||||

it.each([

|

||||

['plan', ApprovalMode.PLAN],

|

||||

['default', ApprovalMode.DEFAULT],

|

||||

['auto-edit', ApprovalMode.AUTO_EDIT],

|

||||

['yolo', ApprovalMode.YOLO],

|

||||

] as const)('maps %s mode', async (modeId, expected) => {

|

||||

const result = await session.setMode({

|

||||

sessionId: 'test-session-id',

|

||||

modeId,

|

||||

});

|

||||

|

||||

expect(mockConfig.setApprovalMode).toHaveBeenCalledWith(expected);

|

||||

expect(result).toEqual({ modeId });

|

||||

});

|

||||

});

|

||||

|

||||

describe('setModel', () => {

|

||||

it('sets model via config and returns current model', async () => {

|

||||

const result = await session.setModel({

|

||||

sessionId: 'test-session-id',

|

||||

modelId: ' qwen3-coder-plus ',

|

||||

});

|

||||

|

||||

expect(mockConfig.setModel).toHaveBeenCalledWith('qwen3-coder-plus', {

|

||||

reason: 'user_request_acp',

|

||||

context: 'session/set_model',

|

||||

});

|

||||

expect(mockConfig.getModel).toHaveBeenCalled();

|

||||

expect(result).toEqual({ modelId: 'qwen3-coder-plus' });

|

||||

});

|

||||

|

||||

it('rejects empty/whitespace model IDs', async () => {

|

||||

await expect(

|

||||

session.setModel({

|

||||

sessionId: 'test-session-id',

|

||||

modelId: ' ',

|

||||

}),

|

||||

).rejects.toThrow('Invalid params');

|

||||

|

||||

expect(mockConfig.setModel).not.toHaveBeenCalled();

|

||||

});

|

||||

|

||||

it('propagates errors from config.setModel', async () => {

|

||||

const configError = new Error('Invalid model');

|

||||

setModelSpy.mockRejectedValueOnce(configError);

|

||||

|

||||

await expect(

|

||||

session.setModel({

|

||||

sessionId: 'test-session-id',

|

||||

modelId: 'invalid-model',

|

||||

}),

|

||||

).rejects.toThrow('Invalid model');

|

||||

});

|

||||

});

|

||||

|

||||

describe('sendAvailableCommandsUpdate', () => {

|

||||

it('sends available_commands_update from getAvailableCommands()', async () => {

|

||||

getAvailableCommandsSpy.mockResolvedValueOnce([

|

||||

{

|

||||

name: 'init',

|

||||

description: 'Initialize project context',

|

||||

},

|

||||

]);

|

||||

|

||||

await session.sendAvailableCommandsUpdate();

|

||||

|

||||

expect(getAvailableCommandsSpy).toHaveBeenCalledWith(

|

||||

mockConfig,

|

||||

expect.any(AbortSignal),

|

||||

);

|

||||

expect(mockClient.sessionUpdate).toHaveBeenCalledWith({

|

||||

sessionId: 'test-session-id',

|

||||

update: {

|

||||

sessionUpdate: 'available_commands_update',

|

||||

availableCommands: [

|

||||

{

|

||||

name: 'init',

|

||||

description: 'Initialize project context',

|

||||

input: null,

|

||||

},

|

||||

],

|

||||

},

|

||||

});

|

||||

});

|

||||

|

||||

it('swallows errors and does not throw', async () => {

|

||||

const consoleErrorSpy = vi

|

||||

.spyOn(console, 'error')

|

||||

.mockImplementation(() => undefined);

|

||||

getAvailableCommandsSpy.mockRejectedValueOnce(

|

||||

new Error('Command discovery failed'),

|

||||

);

|

||||

|

||||

await expect(

|

||||

session.sendAvailableCommandsUpdate(),

|

||||

).resolves.toBeUndefined();

|

||||

expect(mockClient.sessionUpdate).not.toHaveBeenCalled();

|

||||

expect(consoleErrorSpy).toHaveBeenCalled();

|

||||

consoleErrorSpy.mockRestore();

|

||||

});

|

||||

});

|

||||

});

|

||||

@@ -52,8 +52,6 @@ import type {

|

||||

AvailableCommandsUpdate,

|

||||

SetModeRequest,

|

||||

SetModeResponse,

|

||||

SetModelRequest,

|

||||

SetModelResponse,

|

||||

ApprovalModeValue,

|

||||

CurrentModeUpdate,

|

||||

} from '../schema.js';

|

||||

@@ -350,31 +348,6 @@ export class Session implements SessionContext {

    return { modeId: params.modeId };
  }

  /**
   * Sets the model for the current session.
   * Validates the model ID and switches the model via Config.
   */
  async setModel(params: SetModelRequest): Promise<SetModelResponse> {
    const modelId = params.modelId.trim();

    if (!modelId) {
      throw acp.RequestError.invalidParams('modelId cannot be empty');
    }

    // Attempt to set the model using config
    await this.config.setModel(modelId, {
      reason: 'user_request_acp',
      context: 'session/set_model',
    });

    // Get updated model info
    const currentModel = this.config.getModel();

    return {
      modelId: currentModel,
    };
  }

  /**
   * Sends a current_mode_update notification to the client.
   * Called after the agent switches modes (e.g., from exit_plan_mode tool).

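
For readers who want to try the trim-validate-delegate pattern of `setModel` in isolation, here is a minimal standalone sketch. `ModelConfigLike`, `InvalidParamsError`, and `setSessionModel` are hypothetical stand-ins for the `Config` and `acp.RequestError` types in the hunk, and the options argument passed to `config.setModel` is left out.

```typescript
// Hypothetical stand-ins; the real Config and RequestError types are not reproduced here.
interface ModelConfigLike {
  setModel(modelId: string): Promise<void>;
  getModel(): string;
}

class InvalidParamsError extends Error {}

async function setSessionModel(
  config: ModelConfigLike,
  rawModelId: string,
): Promise<{ modelId: string }> {
  const modelId = rawModelId.trim();
  if (!modelId) {
    // Reject empty or whitespace-only IDs before touching the config.
    throw new InvalidParamsError('modelId cannot be empty');
  }
  await config.setModel(modelId);
  // Report what the config now holds rather than echoing the request.
  return { modelId: config.getModel() };
}

// In-memory config used to exercise the function.
let activeModel = 'initial-model';
const fakeConfig: ModelConfigLike = {
  setModel: async (id: string) => {
    activeModel = id;
  },
  getModel: () => activeModel,
};
```

Calling `setSessionModel(fakeConfig, ' qwen3-coder-plus ')` resolves with the trimmed ID, while a whitespace-only ID rejects without calling `setModel`.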

@@ -1196,11 +1196,6 @@ describe('Hierarchical Memory Loading (config.ts) - Placeholder Suite', () => {

|

||||

],

|

||||

true,

|

||||

'tree',

|

||||

{

|

||||

respectGitIgnore: false,

|

||||

respectQwenIgnore: true,

|

||||

},

|

||||

undefined, // maxDirs

|

||||

);

|

||||

});

|

||||

|

||||

|

||||

@@ -9,7 +9,6 @@ import {

|

||||

AuthType,

|

||||

Config,

|

||||

DEFAULT_QWEN_EMBEDDING_MODEL,

|

||||

DEFAULT_MEMORY_FILE_FILTERING_OPTIONS,

|

||||

FileDiscoveryService,

|

||||

getCurrentGeminiMdFilename,

|

||||

loadServerHierarchicalMemory,

|

||||

@@ -22,7 +21,6 @@ import {

|

||||

isToolEnabled,

|

||||

SessionService,

|

||||

type ResumedSessionData,

|

||||

type FileFilteringOptions,

|

||||

type MCPServerConfig,

|

||||

type ToolName,

|

||||

EditTool,

|

||||

@@ -643,7 +641,6 @@ export async function loadHierarchicalGeminiMemory(

|

||||

extensionContextFilePaths: string[] = [],

|

||||

folderTrust: boolean,

|

||||

memoryImportFormat: 'flat' | 'tree' = 'tree',

|

||||

fileFilteringOptions?: FileFilteringOptions,

|

||||

): Promise<{ memoryContent: string; fileCount: number }> {

|

||||

// FIX: Use real, canonical paths for a reliable comparison to handle symlinks.

|

||||

const realCwd = fs.realpathSync(path.resolve(currentWorkingDirectory));

|

||||

@@ -669,8 +666,6 @@ export async function loadHierarchicalGeminiMemory(

|

||||

extensionContextFilePaths,

|

||||

folderTrust,

|

||||

memoryImportFormat,

|

||||

fileFilteringOptions,

|

||||

settings.context?.discoveryMaxDirs,

|

||||

);

|

||||

}

|

||||

|

||||

@@ -740,11 +735,6 @@ export async function loadCliConfig(

|

||||

|

||||

const fileService = new FileDiscoveryService(cwd);

|

||||

|

||||

const fileFiltering = {

|

||||

...DEFAULT_MEMORY_FILE_FILTERING_OPTIONS,

|

||||

...settings.context?.fileFiltering,

|

||||

};

|

||||

|

||||

const includeDirectories = (settings.context?.includeDirectories || [])

|

||||

.map(resolvePath)

|

||||

.concat((argv.includeDirectories || []).map(resolvePath));

|

||||

@@ -761,7 +751,6 @@ export async function loadCliConfig(

|

||||

extensionContextFilePaths,

|

||||

trustedFolder,

|

||||

memoryImportFormat,

|

||||

fileFiltering,

|

||||

);

|

||||

|

||||

let mcpServers = mergeMcpServers(settings, activeExtensions);

|

||||

|

||||

@@ -106,7 +106,6 @@ const MIGRATION_MAP: Record<string, string> = {

  mcpServers: 'mcpServers',
  mcpServerCommand: 'mcp.serverCommand',
  memoryImportFormat: 'context.importFormat',
  memoryDiscoveryMaxDirs: 'context.discoveryMaxDirs',
  model: 'model.name',
  preferredEditor: 'general.preferredEditor',
  sandbox: 'tools.sandbox',

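
A map like `MIGRATION_MAP` pairs each legacy flat key with a dotted path in the nested settings layout. The sketch below shows one way such a map could be applied. It is not the project's migration code, which this diff does not show: `setPath` and `migrateFlatSettings` are hypothetical, only three entries from the hunk are copied, and passing unknown keys through unchanged is an assumption.

```typescript
// Three entries copied from the hunk above; the full map is longer.
const MIGRATION_MAP: Record<string, string> = {
  memoryImportFormat: 'context.importFormat',
  model: 'model.name',
  preferredEditor: 'general.preferredEditor',
};

type Json = Record<string, unknown>;

// Writes `value` at a dotted path, creating intermediate objects as needed.
function setPath(target: Json, dottedPath: string, value: unknown): void {
  const parts = dottedPath.split('.');
  const last = parts.pop() as string;
  let node = target;
  for (const key of parts) {
    const next = node[key];
    if (typeof next !== 'object' || next === null) {
      node[key] = {};
    }
    node = node[key] as Json;
  }
  node[last] = value;
}

function migrateFlatSettings(flat: Json): Json {
  const nested: Json = {};
  for (const key of Object.keys(flat)) {
    const newPath = MIGRATION_MAP[key];
    if (newPath !== undefined) {
      setPath(nested, newPath, flat[key]);
    } else {
      // Assumption: keys without a mapping are copied as-is.
      nested[key] = flat[key];
    }
  }
  return nested;
}

const migrated = migrateFlatSettings({
  model: 'qwen3-coder-plus',
  memoryImportFormat: 'tree',
});
```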

@@ -690,6 +690,18 @@ const SETTINGS_SCHEMA = {

|

||||

{ value: 'openapi_30', label: 'OpenAPI 3.0 Strict' },

|

||||

],

|

||||

},

|

||||

contextWindowSize: {

|

||||

type: 'number',

|

||||

label: 'Context Window Size',

|

||||

category: 'Generation Configuration',

|

||||

requiresRestart: false,

|

||||

default: -1,

|

||||

description:

|

||||

'Override the automatic context window size detection. Set to -1 to use automatic detection based on the model. Set to a positive number to use a custom context window size.',

|

||||

parentKey: 'generationConfig',

|

||||

childKey: 'contextWindowSize',

|

||||

showInDialog: true,

|

||||

},

|

||||

},

|

||||

},

|

||||

},

|

||||

@@ -722,15 +734,6 @@ const SETTINGS_SCHEMA = {

|

||||

description: 'The format to use when importing memory.',

|

||||

showInDialog: false,

|

||||

},

|

||||

discoveryMaxDirs: {

|

||||

type: 'number',

|

||||

label: 'Memory Discovery Max Dirs',

|

||||

category: 'Context',

|

||||

requiresRestart: false,

|

||||

default: 200,

|

||||

description: 'Maximum number of directories to search for memory.',

|

||||

showInDialog: true,

|

||||

},

|

||||

includeDirectories: {

|

||||

type: 'array',

|

||||

label: 'Include Directories',

|

||||

|

||||

@@ -575,7 +575,6 @@ export const AppContainer = (props: AppContainerProps) => {

|

||||

config.getExtensionContextFilePaths(),

|

||||

config.isTrustedFolder(),

|

||||

settings.merged.context?.importFormat || 'tree', // Use setting or default to 'tree'

|

||||

config.getFileFilteringOptions(),

|

||||

);

|

||||

|

||||

config.setUserMemory(memoryContent);

|

||||

|

||||

@@ -54,9 +54,7 @@ describe('directoryCommand', () => {

|

||||

services: {

|

||||

config: mockConfig,

|

||||

settings: {

|

||||

merged: {

|

||||

memoryDiscoveryMaxDirs: 1000,

|

||||

},

|

||||

merged: {},

|

||||

},

|

||||

},

|

||||

ui: {

|

||||

|

||||

@@ -119,8 +119,6 @@ export const directoryCommand: SlashCommand = {

|

||||

config.getFolderTrust(),

|

||||

context.services.settings.merged.context?.importFormat ||

|

||||

'tree', // Use setting or default to 'tree'

|

||||

config.getFileFilteringOptions(),

|

||||

context.services.settings.merged.context?.discoveryMaxDirs,

|

||||

);

|

||||

config.setUserMemory(memoryContent);

|

||||

config.setGeminiMdFileCount(fileCount);

|

||||

|

||||

@@ -299,9 +299,7 @@ describe('memoryCommand', () => {

|

||||

services: {

|

||||

config: mockConfig,

|

||||

settings: {

|

||||

merged: {

|

||||

memoryDiscoveryMaxDirs: 1000,

|

||||

},

|

||||

merged: {},

|

||||

} as LoadedSettings,

|

||||

},

|

||||

});

|

||||

|

||||

@@ -315,8 +315,6 @@ export const memoryCommand: SlashCommand = {

|

||||

config.getFolderTrust(),

|

||||

context.services.settings.merged.context?.importFormat ||

|

||||

'tree', // Use setting or default to 'tree'

|

||||

config.getFileFilteringOptions(),

|

||||

context.services.settings.merged.context?.discoveryMaxDirs,

|

||||

);

|

||||

config.setUserMemory(memoryContent);

|

||||

config.setGeminiMdFileCount(fileCount);

|

||||

|

||||

@@ -6,18 +6,22 @@

|

||||

|

||||

import { Text } from 'ink';

|

||||

import { theme } from '../semantic-colors.js';

|

||||

import { tokenLimit } from '@qwen-code/qwen-code-core';

|

||||

import { tokenLimit, type Config } from '@qwen-code/qwen-code-core';

|

||||

|

||||

export const ContextUsageDisplay = ({

|

||||

promptTokenCount,

|

||||

model,

|

||||

terminalWidth,

|

||||

config,

|

||||

}: {

|

||||

promptTokenCount: number;

|

||||

model: string;

|

||||

terminalWidth: number;

|

||||

config: Config;

|

||||

}) => {

|

||||

const percentage = promptTokenCount / tokenLimit(model);

|

||||

const contentGeneratorConfig = config.getContentGeneratorConfig();

|

||||

const contextLimit = tokenLimit(model, 'input', contentGeneratorConfig);

|

||||

const percentage = promptTokenCount / contextLimit;

|

||||

const percentageLeft = ((1 - percentage) * 100).toFixed(0);

|

||||

|

||||

const label = terminalWidth < 100 ? '%' : '% context left';

|

||||

|

||||
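The arithmetic in the `ContextUsageDisplay` hunk above can be isolated as a pure function. This is a sketch only: `contextLeftLabel` is a hypothetical name, `contextLimit` stands in for the value returned by `tokenLimit(model, 'input', contentGeneratorConfig)`, and joining the number and label into one string is an assumption about how the component renders them.

```typescript
// Standalone sketch of the percentage computation shown in the hunk above.
function contextLeftLabel(
  promptTokenCount: number,
  contextLimit: number,
  terminalWidth: number,
): string {
  // Fraction of the context window already consumed by the prompt.
  const percentage = promptTokenCount / contextLimit;
  const percentageLeft = ((1 - percentage) * 100).toFixed(0);
  // Narrow terminals get the short label, as in the original component.
  const label = terminalWidth < 100 ? '%' : '% context left';
  return `${percentageLeft}${label}`;
}
```

With a prompt of 25,000 tokens, a limit of 100,000 yields `75% context left` on a wide terminal, while an overridden limit of 50,000 yields `50%` on a narrow one. The change in this hunk is about feeding a configured limit into this calculation.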

@@ -43,6 +43,7 @@ const createMockConfig = (overrides = {}) => ({

|

||||

getModel: vi.fn(() => defaultProps.model),

|

||||

getTargetDir: vi.fn(() => defaultProps.targetDir),

|

||||

getDebugMode: vi.fn(() => false),

|

||||

getContentGeneratorConfig: vi.fn(() => ({})),

|

||||

...overrides,

|

||||

});

|

||||

|

||||

|

||||

@@ -146,6 +146,7 @@ export const Footer: React.FC = () => {

|

||||

promptTokenCount={promptTokenCount}

|

||||

model={model}

|

||||

terminalWidth={terminalWidth}

|

||||

config={config}

|

||||

/>

|

||||

</Text>

|

||||

{showMemoryUsage && <MemoryUsageDisplay />}

|

||||

|

||||

@@ -1331,9 +1331,7 @@ describe('SettingsDialog', () => {

|

||||

truncateToolOutputThreshold: 50000,

|

||||

truncateToolOutputLines: 1000,

|

||||

},

|

||||

context: {

|

||||

discoveryMaxDirs: 500,

|

||||

},

|

||||

context: {},

|

||||

model: {

|

||||

maxSessionTurns: 100,

|

||||

skipNextSpeakerCheck: false,

|

||||

@@ -1466,7 +1464,6 @@ describe('SettingsDialog', () => {

|

||||

disableFuzzySearch: true,

|

||||

},

|

||||

loadMemoryFromIncludeDirectories: true,

|

||||

discoveryMaxDirs: 100,

|

||||

},

|

||||

});

|

||||

const onSelect = vi.fn();

|

||||

|

||||

@@ -91,6 +91,9 @@ export type ContentGeneratorConfig = {

|

||||

userAgent?: string;

|

||||

// Schema compliance mode for tool definitions

|

||||

schemaCompliance?: 'auto' | 'openapi_30';

|

||||

// Context window size override. If set to a positive number, it will override

|

||||

// the automatic detection. Set to -1 to use automatic detection.

|

||||

contextWindowSize?: number;

|

||||

// Custom HTTP headers to be sent with requests

|

||||

customHeaders?: Record<string, string>;

|

||||

};

|

||||

|

||||

@@ -224,14 +224,29 @@ const OUTPUT_PATTERNS: Array<[RegExp, TokenCount]> = [

|

||||

* or output generation based on the model and token type. It uses the same

|

||||

* normalization logic for consistency across both input and output limits.

|

||||

*

|

||||

* If a contentGeneratorConfig is provided with a contextWindowSize > 0, that value

|

||||

* will be used for input token limits instead of the automatic detection.

|

||||

*

|

||||

* @param model - The model name to get the token limit for

|

||||

* @param type - The type of token limit ('input' for context window, 'output' for generation)

|

||||

* @param contentGeneratorConfig - Optional config that may contain a contextWindowSize override

|

||||

* @returns The maximum number of tokens allowed for this model and type

|

||||

*/

|

||||

export function tokenLimit(

|

||||

model: Model,

|

||||

type: TokenLimitType = 'input',

|

||||

contentGeneratorConfig?: { contextWindowSize?: number },

|

||||

): TokenCount {

|

||||

// If user configured a specific context window size for input, use it

|

||||

const configuredLimit = contentGeneratorConfig?.contextWindowSize;

|

||||

if (

|

||||

type === 'input' &&

|

||||

configuredLimit !== undefined &&

|

||||

configuredLimit > 0

|

||||

) {

|

||||

return configuredLimit;

|

||||

}

|

||||

|

||||

const norm = normalize(model);

|

||||

|

||||

// Choose the appropriate patterns based on token type

|

||||

|

||||
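The override rule added to `tokenLimit` in the hunk above can be exercised in isolation. In the sketch below, `tokenLimitSketch` mirrors the guard from the diff, while `detectLimit` is a placeholder for the pattern-based detection that the hunk does not show; its return values are invented for illustration and are not real model limits.

```typescript
type TokenLimitType = 'input' | 'output';

// Placeholder for the automatic, pattern-based detection.
// The numbers are made up for this sketch.
function detectLimit(_model: string, type: TokenLimitType): number {
  return type === 'input' ? 131_072 : 8_192;
}

function tokenLimitSketch(
  model: string,
  type: TokenLimitType = 'input',
  contentGeneratorConfig?: { contextWindowSize?: number },
): number {
  const configuredLimit = contentGeneratorConfig?.contextWindowSize;
  // Only a positive override applies, and only to the input (context) limit.
  if (
    type === 'input' &&
    configuredLimit !== undefined &&
    configuredLimit > 0
  ) {
    return configuredLimit;
  }
  // -1, 0, or a missing value all fall through to automatic detection.
  return detectLimit(model, type);
}
```

Note that the override never affects `'output'` limits, so a custom `contextWindowSize` changes context accounting without changing the generation limit.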

@@ -25,6 +25,7 @@ export const MODEL_GENERATION_CONFIG_FIELDS = [

|

||||

'disableCacheControl',

|

||||

'schemaCompliance',

|

||||

'reasoning',

|

||||

'contextWindowSize',

|

||||

'customHeaders',

|

||||

] as const satisfies ReadonlyArray<keyof ContentGeneratorConfig>;

|

||||

|

||||

|

||||

@@ -118,6 +118,7 @@ describe('ChatCompressionService', () => {

|

||||

mockConfig = {

|

||||

getChatCompression: vi.fn(),

|

||||

getContentGenerator: vi.fn(),

|

||||

getContentGeneratorConfig: vi.fn().mockReturnValue({}),

|

||||

} as unknown as Config;

|

||||

|

||||

vi.mocked(tokenLimit).mockReturnValue(1000);

|

||||

|

||||

@@ -110,7 +110,9 @@ export class ChatCompressionService {

|

||||

|

||||

// Don't compress if not forced and we are under the limit.

|

||||

if (!force) {

|

||||

if (originalTokenCount < threshold * tokenLimit(model)) {

|

||||

const contentGeneratorConfig = config.getContentGeneratorConfig();

|

||||

const contextLimit = tokenLimit(model, 'input', contentGeneratorConfig);

|

||||

if (originalTokenCount < threshold * contextLimit) {

|

||||

return {

|

||||

newHistory: null,

|

||||

info: {

|

||||

|

||||
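The guard in the `ChatCompressionService` hunk above reduces to a single comparison against a fraction of the context limit. The sketch below isolates it; `shouldCompress` is a hypothetical name, and `contextLimit` stands in for `tokenLimit(model, 'input', contentGeneratorConfig)`. How `threshold` is configured is not shown in the hunk, so it is passed in as a plain number.

```typescript
// Sketch of the "don't compress if not forced and under the limit" rule.
function shouldCompress(
  originalTokenCount: number,
  threshold: number, // fraction of the context window, e.g. 0.7
  contextLimit: number,
  force = false,
): boolean {
  if (force) {
    return true;
  }
  // Compression is skipped while the history stays under threshold * limit.
  return !(originalTokenCount < threshold * contextLimit);
}
```

Because the limit now honours a configured `contextWindowSize`, lowering that setting also lowers the token count at which compression starts.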

@@ -112,6 +112,62 @@ You are a helpful assistant with this skill.

|

||||

expect(config.filePath).toBe(validSkillConfig.filePath);

|

||||

});

|

||||

|

||||

it('should parse markdown with CRLF line endings', () => {

|

||||

const markdownCrlf = `---\r

|

||||

name: test-skill\r

|

||||

description: A test skill\r

|

||||

---\r

|

||||

\r

|

||||

You are a helpful assistant with this skill.\r

|

||||

`;

|

||||

|

||||

const config = manager.parseSkillContent(

|

||||

markdownCrlf,

|

||||

validSkillConfig.filePath,

|

||||

'project',

|

||||

);

|

||||

|

||||

expect(config.name).toBe('test-skill');

|

||||

expect(config.description).toBe('A test skill');

|

||||

expect(config.body).toBe('You are a helpful assistant with this skill.');

|

||||

});

|

||||

|

||||

it('should parse markdown with UTF-8 BOM', () => {

|

||||

const markdownWithBom = `\uFEFF---

|

||||

name: test-skill

|

||||

description: A test skill

|

||||

---

|

||||

|

||||

You are a helpful assistant with this skill.

|

||||

`;

|

||||

|

||||

const config = manager.parseSkillContent(

|

||||

markdownWithBom,

|

||||

validSkillConfig.filePath,

|

||||

'project',

|

||||

);

|

||||

|

||||

expect(config.name).toBe('test-skill');

|

||||

expect(config.description).toBe('A test skill');

|

||||

});

|

||||

|

||||

it('should parse markdown when body is empty and file ends after frontmatter', () => {

|

||||

const frontmatterOnly = `---

|

||||

name: test-skill

|

||||

description: A test skill

|

||||

---`;

|

||||

|

||||

const config = manager.parseSkillContent(

|

||||

frontmatterOnly,

|

||||

validSkillConfig.filePath,

|

||||

'project',

|

||||

);

|

||||

|

||||

expect(config.name).toBe('test-skill');

|

||||

expect(config.description).toBe('A test skill');

|

||||

expect(config.body).toBe('');

|

||||

});

|

||||

|

||||

it('should parse content with allowedTools', () => {

|

||||

const markdownWithTools = `---

|

||||

name: test-skill

|

||||

|

||||

@@ -307,9 +307,11 @@ export class SkillManager {

|

||||

level: SkillLevel,

|

||||

): SkillConfig {

|

||||

try {

|

||||

const normalizedContent = normalizeSkillFileContent(content);

|

||||

|

||||

// Split frontmatter and content

|

||||

const frontmatterRegex = /^---\n([\s\S]*?)\n---\n([\s\S]*)$/;

|

||||

const match = content.match(frontmatterRegex);

|

||||

const frontmatterRegex = /^---\n([\s\S]*?)\n---(?:\n|$)([\s\S]*)$/;

|

||||

const match = normalizedContent.match(frontmatterRegex);

|

||||

|

||||

if (!match) {

|

||||

throw new Error('Invalid format: missing YAML frontmatter');

|

||||

@@ -556,3 +558,13 @@ export class SkillManager {

|

||||

}

|

||||

}

|

||||

}

|

||||

|

||||

function normalizeSkillFileContent(content: string): string {

|

||||

// Strip UTF-8 BOM to ensure frontmatter starts at the first character.

|

||||

let normalized = content.replace(/^\uFEFF/, '');

|

||||

|

||||

// Normalize line endings so skills authored on Windows (CRLF) parse correctly.

|

||||

normalized = normalized.replace(/\r\n/g, '\n').replace(/\r/g, '\n');

|

||||

|

||||

return normalized;

|

||||

}

|

||||

|

||||
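The two `SkillManager` hunks above work together: content is normalized first, then matched against the relaxed frontmatter regex. The sketch below combines both steps so the three new test cases (CRLF, BOM, frontmatter-only) can be traced by hand. `normalizeSkillFileContent` and the regex are taken from the diff; `splitFrontmatter` is a hypothetical wrapper, and trimming the body is an assumption inferred from the test expectations rather than something the hunks show.

```typescript
function normalizeSkillFileContent(content: string): string {
  return content
    .replace(/^\uFEFF/, '') // strip a leading UTF-8 BOM
    .replace(/\r\n/g, '\n') // CRLF -> LF
    .replace(/\r/g, '\n'); // lone CR -> LF
}

// Hypothetical wrapper: returns the raw frontmatter text and the body,
// or null when the content does not start with a frontmatter block.
function splitFrontmatter(
  content: string,
): { frontmatter: string; body: string } | null {
  // `(?:\n|$)` lets the closing `---` be the last thing in the file.
  const frontmatterRegex = /^---\n([\s\S]*?)\n---(?:\n|$)([\s\S]*)$/;
  const match = normalizeSkillFileContent(content).match(frontmatterRegex);
  if (!match) {
    return null;
  }
  // Assumption: the body is trimmed before use.
  return { frontmatter: match[1], body: match[2].trim() };
}
```

Without the normalization step, a CRLF file fails at the very first `---\n`, since the opening delimiter is followed by `\r\n`. The old regex also required a newline after the closing `---`, so a file ending right after the frontmatter was rejected.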

@@ -1,232 +0,0 @@

|

||||

/**

|

||||

* @license

|

||||

* Copyright 2025 Google LLC

|

||||

* SPDX-License-Identifier: Apache-2.0

|

||||

*/

|

||||

|

||||

import { describe, it, expect, beforeEach, afterEach } from 'vitest';

|

||||

import * as fsPromises from 'node:fs/promises';

|

||||

import * as path from 'node:path';

|

||||

import * as os from 'node:os';

|

||||

import { bfsFileSearch } from './bfsFileSearch.js';

|

||||

import { FileDiscoveryService } from '../services/fileDiscoveryService.js';

|

||||

|

||||

describe('bfsFileSearch', () => {

|

||||

let testRootDir: string;

|

||||

|

||||

async function createEmptyDir(...pathSegments: string[]) {

|

||||

const fullPath = path.join(testRootDir, ...pathSegments);

|

||||

await fsPromises.mkdir(fullPath, { recursive: true });

|

||||

return fullPath;

|

||||

}

|

||||

|

||||

async function createTestFile(content: string, ...pathSegments: string[]) {

|

||||

const fullPath = path.join(testRootDir, ...pathSegments);

|

||||

await fsPromises.mkdir(path.dirname(fullPath), { recursive: true });

|

||||

await fsPromises.writeFile(fullPath, content);

|

||||

return fullPath;

|

||||

}

|

||||

|

||||

beforeEach(async () => {

|

||||

testRootDir = await fsPromises.mkdtemp(

|

||||

path.join(os.tmpdir(), 'bfs-file-search-test-'),

|

||||

);

|

||||

});

|

||||

|

||||

afterEach(async () => {

|

||||

await fsPromises.rm(testRootDir, { recursive: true, force: true });

|

||||

});

|

||||

|

||||

it('should find a file in the root directory', async () => {

|

||||

const targetFilePath = await createTestFile('content', 'target.txt');

|

||||

const result = await bfsFileSearch(testRootDir, { fileName: 'target.txt' });

|

||||

expect(result).toEqual([targetFilePath]);

|

||||

});

|

||||

|

||||

it('should find a file in a nested directory', async () => {

|

||||

const targetFilePath = await createTestFile(

|

||||

'content',

|

||||

'a',

|

||||

'b',

|

||||

'target.txt',

|

||||

);

|

||||

const result = await bfsFileSearch(testRootDir, { fileName: 'target.txt' });

|

||||

expect(result).toEqual([targetFilePath]);

|

||||

});

|

||||

|

||||

it('should find multiple files with the same name', async () => {

|

||||

const targetFilePath1 = await createTestFile('content1', 'a', 'target.txt');

|

||||

const targetFilePath2 = await createTestFile('content2', 'b', 'target.txt');

|

||||

const result = await bfsFileSearch(testRootDir, { fileName: 'target.txt' });

|

||||

result.sort();

|

||||

expect(result).toEqual([targetFilePath1, targetFilePath2].sort());

|

||||

});

|

||||

|

||||

it('should return an empty array if no file is found', async () => {

|

||||

await createTestFile('content', 'other.txt');

|

||||

const result = await bfsFileSearch(testRootDir, { fileName: 'target.txt' });

|

||||

expect(result).toEqual([]);

|

||||

});

|

||||

|

||||

it('should ignore directories specified in ignoreDirs', async () => {

|

||||

await createTestFile('content', 'ignored', 'target.txt');

|

||||

const targetFilePath = await createTestFile(

|

||||

'content',

|

||||

'not-ignored',

|

||||

'target.txt',

|

||||

);

|

||||

const result = await bfsFileSearch(testRootDir, {

|

||||

fileName: 'target.txt',

|

||||

ignoreDirs: ['ignored'],

|

||||

});

|

||||

expect(result).toEqual([targetFilePath]);

|

||||

});

|

||||

|

||||

it('should respect the maxDirs limit and not find the file', async () => {

|

||||

await createTestFile('content', 'a', 'b', 'c', 'target.txt');

|

||||

const result = await bfsFileSearch(testRootDir, {

|

||||

fileName: 'target.txt',

|

||||

maxDirs: 3,

|

||||

});

|

||||

expect(result).toEqual([]);

|

||||

});

|

||||

|

||||

it('should respect the maxDirs limit and find the file', async () => {

|

||||

const targetFilePath = await createTestFile(

|

||||

'content',

|

||||

'a',

|

||||

'b',

|

||||

'c',

|

||||

'target.txt',

|

||||

);

|

||||

const result = await bfsFileSearch(testRootDir, {

|

||||

fileName: 'target.txt',

|

||||

maxDirs: 4,

|

||||

});

|

||||

expect(result).toEqual([targetFilePath]);

|

||||

});

|

||||

|

||||

describe('with FileDiscoveryService', () => {

|

||||

let projectRoot: string;

|

||||

|

||||

beforeEach(async () => {

|

||||

projectRoot = await createEmptyDir('project');

|

||||

});

|

||||

|

||||

it('should ignore gitignored files', async () => {

|

||||

await createEmptyDir('project', '.git');

|

||||

await createTestFile('node_modules/', 'project', '.gitignore');

|

||||

await createTestFile('content', 'project', 'node_modules', 'target.txt');

|

||||

const targetFilePath = await createTestFile(

|

||||

'content',

|

||||

'project',

|

||||

'not-ignored',

|

||||

'target.txt',

|

||||

);

|

||||

|

||||

const fileService = new FileDiscoveryService(projectRoot);

|

||||

const result = await bfsFileSearch(projectRoot, {

|

||||

fileName: 'target.txt',

|

||||

fileService,

|

||||

fileFilteringOptions: {

|

||||

respectGitIgnore: true,

|

||||

respectQwenIgnore: true,

|

||||

},

|

||||

});

|

||||

|

||||

expect(result).toEqual([targetFilePath]);

|

||||

});

|

||||

|

||||

it('should ignore qwenignored files', async () => {

|

||||

await createTestFile('node_modules/', 'project', '.qwenignore');

|

||||

await createTestFile('content', 'project', 'node_modules', 'target.txt');

|

||||

const targetFilePath = await createTestFile(

|

||||

'content',

|

||||

'project',

|

||||

'not-ignored',

|

||||

'target.txt',

|

||||

);

|

||||

|

||||

const fileService = new FileDiscoveryService(projectRoot);

|

||||

const result = await bfsFileSearch(projectRoot, {

|

||||

fileName: 'target.txt',

|

||||

fileService,

|

||||

fileFilteringOptions: {

|

||||

respectGitIgnore: false,

|

||||

respectQwenIgnore: true,

|

||||

},

|

||||

});

|

||||

|

||||

expect(result).toEqual([targetFilePath]);

|

||||

});

|

||||

|

||||

it('should not ignore files if respect flags are false', async () => {

|

||||

await createEmptyDir('project', '.git');

|

||||

await createTestFile('node_modules/', 'project', '.gitignore');

|

||||

const target1 = await createTestFile(

|

||||

'content',

|

||||

'project',

|

||||

'node_modules',

|

||||

'target.txt',

|

||||

);

|

||||

const target2 = await createTestFile(

|

||||

'content',

|

||||

'project',

|

||||

'not-ignored',

|

||||

'target.txt',

|

||||

);

|

||||

|

||||

const fileService = new FileDiscoveryService(projectRoot);

|

||||

const result = await bfsFileSearch(projectRoot, {

|

||||

fileName: 'target.txt',

|

||||

fileService,

|

||||

fileFilteringOptions: {

|

||||

respectGitIgnore: false,

|

||||

respectQwenIgnore: false,

|

||||

},

|

||||

});

|

||||

|

||||

expect(result.sort()).toEqual([target1, target2].sort());

|

||||

});

|

||||

});

|

||||

|

||||

it('should find all files in a complex directory structure', async () => {

|

||||

// Create a complex directory structure to test correctness at scale

|

||||

// without flaky performance checks.

|

||||

const numDirs = 50;

|

||||

const numFilesPerDir = 2;

|

||||

const numTargetDirs = 10;

|

||||

|

||||

const dirCreationPromises: Array<Promise<unknown>> = [];

|

||||

for (let i = 0; i < numDirs; i++) {

|

||||

dirCreationPromises.push(createEmptyDir(`dir${i}`));

|

||||

dirCreationPromises.push(createEmptyDir(`dir${i}`, 'subdir1'));

|

||||

dirCreationPromises.push(createEmptyDir(`dir${i}`, 'subdir2'));

|

||||

dirCreationPromises.push(createEmptyDir(`dir${i}`, 'subdir1', 'deep'));

|

||||

}

|

||||

await Promise.all(dirCreationPromises);

|

||||

|

||||

const fileCreationPromises: Array<Promise<string>> = [];

|

||||

for (let i = 0; i < numTargetDirs; i++) {

|

||||

// Add target files in some directories

|

||||

fileCreationPromises.push(

|

||||

createTestFile('content', `dir${i}`, 'QWEN.md'),

|

||||

);

|

||||

fileCreationPromises.push(

|

||||

createTestFile('content', `dir${i}`, 'subdir1', 'QWEN.md'),

|

||||

);

|

||||

}

|

||||

const expectedFiles = await Promise.all(fileCreationPromises);

|

||||

|

||||

const result = await bfsFileSearch(testRootDir, {

|

||||

fileName: 'QWEN.md',

|

||||

// Provide a generous maxDirs limit to ensure it doesn't prematurely stop

|

||||

// in this large test case. Total dirs created is 200.

|

||||

maxDirs: 250,

|

||||

});

|

||||

|

||||

// Verify we found the exact files we created

|

||||

expect(result.length).toBe(numTargetDirs * numFilesPerDir);

|

||||

expect(result.sort()).toEqual(expectedFiles.sort());

|

||||

});

|

||||

});

|

||||
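The two `maxDirs` tests in the removed file above depend on how the directory budget is counted: the root directory itself consumes one unit, so a file at `a/b/c/target.txt` needs a budget of four. The sketch below reproduces that counting on an in-memory tree so it can run without touching the filesystem. `DirNode` and `bfsFind` are hypothetical, and the sketch is sequential, omitting the batching, ignore lists, and `FileDiscoveryService` filtering of the real `bfsFileSearch`.

```typescript
// In-memory stand-in for a directory: child directories and file names.
interface DirNode {
  dirs: Record<string, DirNode>;
  files: string[];
}

function bfsFind(
  root: DirNode,
  fileName: string,
  maxDirs: number = Infinity,
): string[] {
  const found: string[] = [];
  const queue: Array<{ dirPath: string; node: DirNode }> = [
    { dirPath: '', node: root },
  ];
  let head = 0; // index-based queue head, avoids array shifting
  let scanned = 0;

  while (head < queue.length && scanned < maxDirs) {
    const { dirPath, node } = queue[head++];
    scanned++; // every directory read, including the root, uses budget

    for (const file of node.files) {
      if (file === fileName) {
        found.push(`${dirPath}/${file}`);
      }
    }
    // Children are queued behind everything already waiting (breadth-first).
    for (const [name, child] of Object.entries(node.dirs)) {
      queue.push({ dirPath: `${dirPath}/${name}`, node: child });
    }
  }
  return found;
}
```

With a budget of three, the search reads the root, `a`, and `b`, then stops before reading `c`. A budget of four reads `c` and finds the file, matching the expectations in the tests.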

@@ -1,131 +0,0 @@

|

||||

/**

|

||||

* @license

|

||||

* Copyright 2025 Google LLC

|

||||

* SPDX-License-Identifier: Apache-2.0

|

||||

*/

|

||||

|

||||

import * as fs from 'node:fs/promises';

|

||||

import * as path from 'node:path';

|

||||

import type { FileDiscoveryService } from '../services/fileDiscoveryService.js';

|

||||

import type { FileFilteringOptions } from '../config/constants.js';

|

||||

// Simple console logger for now.

|

||||

// TODO: Integrate with a more robust server-side logger.

|

||||

const logger = {

|

||||

// eslint-disable-next-line @typescript-eslint/no-explicit-any

|

||||

debug: (...args: any[]) => console.debug('[DEBUG] [BfsFileSearch]', ...args),

|

||||

};

|

||||

|

||||

interface BfsFileSearchOptions {

|

||||

fileName: string;

|

||||

ignoreDirs?: string[];

|

||||

maxDirs?: number;

|

||||

debug?: boolean;

|

||||

fileService?: FileDiscoveryService;

|

||||

fileFilteringOptions?: FileFilteringOptions;

|

||||

}

|

||||

|

||||

/**

|

||||

* Performs a breadth-first search for a specific file within a directory structure.

|

||||

*

|

||||

* @param rootDir The directory to start the search from.

|

||||

* @param options Configuration for the search.

|

||||

* @returns A promise that resolves to an array of paths where the file was found.

|

||||

*/

|

||||

export async function bfsFileSearch(

|

||||

rootDir: string,

|

||||

options: BfsFileSearchOptions,

|

||||

): Promise<string[]> {

|

||||

const {

|

||||

fileName,

|

||||

ignoreDirs = [],

|

||||

maxDirs = Infinity,

|

||||

debug = false,

|

||||

fileService,

|

||||

} = options;

|

||||

const foundFiles: string[] = [];

|

||||

const queue: string[] = [rootDir];

|

||||

const visited = new Set<string>();

|

||||

let scannedDirCount = 0;

|

||||

let queueHead = 0; // Pointer-based queue head to avoid expensive splice operations

|

||||

|

||||

// Convert ignoreDirs array to Set for O(1) lookup performance

|

||||

const ignoreDirsSet = new Set(ignoreDirs);

|

||||

|

||||

// Process directories in parallel batches for maximum performance

|

||||

const PARALLEL_BATCH_SIZE = 15; // Parallel processing batch size for optimal performance

|

||||

|

||||

while (queueHead < queue.length && scannedDirCount < maxDirs) {

|

||||

// Fill batch with unvisited directories up to the desired size

|

||||

const batchSize = Math.min(PARALLEL_BATCH_SIZE, maxDirs - scannedDirCount);

|

||||

const currentBatch = [];

|

||||

while (currentBatch.length < batchSize && queueHead < queue.length) {

|

||||

const currentDir = queue[queueHead];

|

||||

queueHead++;

|

||||

if (!visited.has(currentDir)) {

|

||||

visited.add(currentDir);

|

||||

currentBatch.push(currentDir);

|

||||

}

|

||||

}

|

||||

scannedDirCount += currentBatch.length;

|

||||

|

||||

if (currentBatch.length === 0) continue;

|

||||

|

||||

if (debug) {

|

||||

logger.debug(

|

||||

`Scanning [${scannedDirCount}/${maxDirs}]: batch of ${currentBatch.length}`,

|

||||

);

|

||||

}

|

||||

|

||||

// Read directories in parallel instead of one by one

|

||||

const readPromises = currentBatch.map(async (currentDir) => {

|

||||

try {

|

||||

const entries = await fs.readdir(currentDir, { withFileTypes: true });

|

||||

return { currentDir, entries };

|

||||

} catch (error) {

|

||||

// Warn user that a directory could not be read, as this affects search results.

|

||||

const message = (error as Error)?.message ?? 'Unknown error';

|

||||

console.warn(

|

||||

`[WARN] Skipping unreadable directory: ${currentDir} (${message})`,

|

||||

);

|

||||

if (debug) {

|

||||

logger.debug(`Full error for ${currentDir}:`, error);

|

||||

}

|

||||

return { currentDir, entries: [] };

|

||||

}

|

||||

});

|

||||

|

||||

const results = await Promise.all(readPromises);

|

||||

|

||||

for (const { currentDir, entries } of results) {

|

||||

for (const entry of entries) {

|

||||

const fullPath = path.join(currentDir, entry.name);

|

||||

const isDirectory = entry.isDirectory();

|

||||

const isMatchingFile = entry.isFile() && entry.name === fileName;

|

||||

|

||||

if (!isDirectory && !isMatchingFile) {

|

||||

continue;

|

||||

}

|

||||

if (isDirectory && ignoreDirsSet.has(entry.name)) {

|

||||

continue;

|

||||

}

|

||||

|

||||

if (

|

||||

fileService?.shouldIgnoreFile(fullPath, {

|

||||

respectGitIgnore: options.fileFilteringOptions?.respectGitIgnore,

|

||||

respectQwenIgnore: options.fileFilteringOptions?.respectQwenIgnore,

|

||||

})

|

||||

) {

|

||||

continue;

|

||||

}

|

||||

|

||||

if (isDirectory) {

|

||||

queue.push(fullPath);