mirror of

https://github.com/QwenLM/qwen-code.git

synced 2026-01-14 04:49:14 +00:00

Compare commits

5 Commits

release/v0

...

feat/suppo

| Author | SHA1 | Date |
|---|---|---|
| | c4e6c096dc | |
| | 4857f2f803 | |
| | 5a907c3415 | |
| | d1d215b82e | |
| | a67a8d0277 | |

3

.github/CODEOWNERS

vendored


@@ -1,3 +0,0 @@

|

||||

* @tanzhenxin @DennisYu07 @gwinthis @LaZzyMan @pomelo-nwu @Mingholy

|

||||

# SDK TypeScript package changes require review from Mingholy

|

||||

packages/sdk-typescript/** @Mingholy

|

||||

17

.github/workflows/release-sdk.yml

vendored


@@ -241,7 +241,7 @@ jobs:

|

||||

${{ steps.vars.outputs.is_dry_run == 'false' && steps.vars.outputs.is_nightly == 'false' && steps.vars.outputs.is_preview == 'false' }}

|

||||

id: 'pr'

|

||||

env:

|

||||

GITHUB_TOKEN: '${{ secrets.CI_BOT_PAT }}'

|

||||

GITHUB_TOKEN: '${{ secrets.GITHUB_TOKEN }}'

|

||||

RELEASE_BRANCH: '${{ steps.release_branch.outputs.BRANCH_NAME }}'

|

||||

RELEASE_TAG: '${{ steps.version.outputs.RELEASE_TAG }}'

|

||||

run: |-

|

||||

@@ -258,15 +258,26 @@ jobs:

|

||||

|

||||

echo "PR_URL=${pr_url}" >> "${GITHUB_OUTPUT}"

|

||||

|

||||

- name: 'Wait for CI checks to complete'

|

||||

if: |-

|

||||

${{ steps.vars.outputs.is_dry_run == 'false' && steps.vars.outputs.is_nightly == 'false' && steps.vars.outputs.is_preview == 'false' }}

|

||||

env:

|

||||

GITHUB_TOKEN: '${{ secrets.GITHUB_TOKEN }}'

|

||||

PR_URL: '${{ steps.pr.outputs.PR_URL }}'

|

||||

run: |-

|

||||

set -euo pipefail

|

||||

echo "Waiting for CI checks to complete..."

|

||||

gh pr checks "${PR_URL}" --watch --interval 30

|

||||

|

||||

- name: 'Enable auto-merge for release PR'

|

||||

if: |-

|

||||

${{ steps.vars.outputs.is_dry_run == 'false' && steps.vars.outputs.is_nightly == 'false' && steps.vars.outputs.is_preview == 'false' }}

|

||||

env:

|

||||

GITHUB_TOKEN: '${{ secrets.CI_BOT_PAT }}'

|

||||

GITHUB_TOKEN: '${{ secrets.GITHUB_TOKEN }}'

|

||||

PR_URL: '${{ steps.pr.outputs.PR_URL }}'

|

||||

run: |-

|

||||

set -euo pipefail

|

||||

gh pr merge "${PR_URL}" --merge --auto --delete-branch

|

||||

gh pr merge "${PR_URL}" --merge --auto

|

||||

|

||||

- name: 'Create Issue on Failure'

|

||||

if: |-

|

||||

|

||||

1

.gitignore

vendored


@@ -23,7 +23,6 @@ package-lock.json

|

||||

.idea

|

||||

*.iml

|

||||

.cursor

|

||||

.qoder

|

||||

|

||||

# OS metadata

|

||||

.DS_Store

|

||||

|

||||

7

.vscode/settings.json

vendored


@@ -13,5 +13,10 @@

|

||||

"[javascript]": {

|

||||

"editor.defaultFormatter": "esbenp.prettier-vscode"

|

||||

},

|

||||

"vitest.disableWorkspaceWarning": true

|

||||

"vitest.disableWorkspaceWarning": true,

|

||||

"lsp": {

|

||||

"enabled": true,

|

||||

"allowed": ["typescript-language-server"],

|

||||

"excluded": ["gopls"]

|

||||

}

|

||||

}

|

||||

|

||||

10

README.md


@@ -25,7 +25,7 @@ Qwen Code is an open-source AI agent for the terminal, optimized for [Qwen3-Code

|

||||

- **OpenAI-compatible, OAuth free tier**: use an OpenAI-compatible API, or sign in with Qwen OAuth to get 2,000 free requests/day.

|

||||

- **Open-source, co-evolving**: both the framework and the Qwen3-Coder model are open-source—and they ship and evolve together.

|

||||

- **Agentic workflow, feature-rich**: rich built-in tools (Skills, SubAgents, Plan Mode) for a full agentic workflow and a Claude Code-like experience.

|

||||

- **Terminal-first, IDE-friendly**: built for developers who live in the command line, with optional integration for VS Code, Zed, and JetBrains IDEs.

|

||||

- **Terminal-first, IDE-friendly**: built for developers who live in the command line, with optional integration for VS Code and Zed.

|

||||

|

||||

## Installation

|

||||

|

||||

@@ -137,11 +137,10 @@ Use `-p` to run Qwen Code without the interactive UI—ideal for scripts, automa

|

||||

|

||||

#### IDE integration

|

||||

|

||||

Use Qwen Code inside your editor (VS Code, Zed, and JetBrains IDEs):

|

||||

Use Qwen Code inside your editor (VS Code and Zed):

|

||||

|

||||

- [Use in VS Code](https://qwenlm.github.io/qwen-code-docs/en/users/integration-vscode/)

|

||||

- [Use in Zed](https://qwenlm.github.io/qwen-code-docs/en/users/integration-zed/)

|

||||

- [Use in JetBrains IDEs](https://qwenlm.github.io/qwen-code-docs/en/users/integration-jetbrains/)

|

||||

|

||||

#### TypeScript SDK

|

||||

|

||||

@@ -201,11 +200,6 @@ If you encounter issues, check the [troubleshooting guide](https://qwenlm.github

|

||||

|

||||

To report a bug from within the CLI, run `/bug` and include a short title and repro steps.

|

||||

|

||||

## Connect with Us

|

||||

|

||||

- Discord: https://discord.gg/ycKBjdNd

|

||||

- Dingtalk: https://qr.dingtalk.com/action/joingroup?code=v1,k1,+FX6Gf/ZDlTahTIRi8AEQhIaBlqykA0j+eBKKdhLeAE=&_dt_no_comment=1&origin=1

|

||||

|

||||

## Acknowledgments

|

||||

|

||||

This project is based on [Google Gemini CLI](https://github.com/google-gemini/gemini-cli). We acknowledge and appreciate the excellent work of the Gemini CLI team. Our main contribution focuses on parser-level adaptations to better support Qwen-Coder models.

|

||||

|

||||

147

cclsp-integration-plan.md

Normal file


@@ -0,0 +1,147 @@

|

||||

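# Qwen Code CLI LSP Integration: Implementation Plan Analysis

## 1. Project Overview

This plan aims to integrate LSP (Language Server Protocol) capabilities natively into Qwen Code CLI, so that the AI agent can use code navigation, go-to-definition, find-references, and related features. LSP will be implemented as a first-class extension mechanism that runs in parallel with MCP.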

|

||||

|

||||

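## 2. Comparison of Technical Approaches

### 2.1 The Piebald-AI/claude-code-lsps approach

- **Architecture**: The client communicates directly with each LSP server; a `.lsp.json` configuration file declares the server command/arguments, the stdio transport, and file-extension routing
- **User configuration**: Low friction; users only need to place a `.lsp.json` file and make sure the LSP binaries are installed
- **Security**: LSP child processes run with the user's privileges, with no built-in trust gating
- **Feature coverage**: Can expose the full LSP surface (hover, diagnostics, code actions, rename, and so on)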
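
As a rough illustration of the file-extension routing described above, the sketch below models a `.lsp.json`-style configuration in TypeScript. The field names (`command`, `args`, `transport`, `extensions`) and the `pickServer` helper are assumptions made for this example; the plan does not show the actual schema.

```typescript
// Hypothetical shape of one server entry; the field names are assumptions.
interface LspServerEntry {
  command: string;
  args: string[];
  transport: 'stdio';
  extensions: string[]; // file extensions routed to this server, e.g. ".ts"
}

type LspConfig = Record<string, LspServerEntry>;

// Example configuration, as it might look after parsing a `.lsp.json` file.
const exampleConfig: LspConfig = {
  typescript: {
    command: 'typescript-language-server',
    args: ['--stdio'],
    transport: 'stdio',
    extensions: ['.ts', '.tsx'],
  },
  go: {
    command: 'gopls',
    args: [],
    transport: 'stdio',
    extensions: ['.go'],
  },
};

// Return the name of the first server whose extension list matches the file.
function pickServer(config: LspConfig, filePath: string): string | undefined {
  const lower = filePath.toLowerCase();
  for (const [name, entry] of Object.entries(config)) {
    if (entry.extensions.some((ext) => lower.endsWith(ext))) {
      return name;
    }
  }
  return undefined;
}
```

Files with no matching entry return `undefined`, which a caller could treat as "no language server available for this file".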

|

||||

|

||||

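### 2.2 Native LSP client approach (recommended)

- **Architecture**: Qwen Code CLI acts directly as the LSP client and establishes JSON-RPC connections with language servers
- **User configuration**: Supports built-in presets plus user-defined `.lsp.json` configuration
- **Security**: Shares the same security controls as MCP (trusted workspaces, allow/deny lists, confirmation prompts)
- **Feature coverage**: Exposes the full LSP feature set (streaming diagnostics, code actions, rename, semantic tokens, and so on)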

|

||||

|

||||

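### 2.3 cclsp + MCP approach (alternative)

- **Architecture**: Calls cclsp through the MCP protocol, using it as an LSP bridge
- **User configuration**: Requires MCP configuration
- **Security**: Governed by MCP security controls
- **Feature coverage**: Depends on the MCP tools that cclsp maps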

|

||||

|

||||

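## 3. Detailed Plan for Native LSP Integration

### 3.1 Choice of approach

- **Recommended approach**: Use the native LSP client as the primary path, because it offers full LSP functionality, lower latency, and a better user experience
- **Compatibility layer**: Keep cclsp+MCP as a compatibility bridge for existing MCP workflows
- **Parallel architecture**: LSP and MCP coexist as independent extension mechanisms and share security policies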

|

||||

|

||||

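### 3.2 Implementation steps

#### 3.2.1 Create the native LSP service

Create a `NativeLspService` class under `packages/cli/src/services/lsp/` that handles:

- Workspace language detection
- Automatic discovery and startup of language servers
- Synchronization with the existing document/editing model
- Direct exposure of LSP capabilities to the agent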

|

||||

|

||||

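#### 3.2.2 Configuration support

- Support built-in preset configurations (common language servers)
- Support user-defined `.lsp.json` configuration files
- Coexist with MCP configuration and share trust controls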

|

||||

|

||||

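#### 3.2.3 Integrate into the startup flow

- Integrate within the `loadCliConfig` function in `packages/cli/src/config/config.ts`
- Ensure the LSP service and the MCP service share the same security control mechanisms
- Handle the duplicate invocation caused by the sandbox preflight and the main run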

|

||||

|

||||

#### 3.2.4 Feature flag configuration

- Add new settings in `packages/cli/src/config/settingsSchema.ts`
- Provide a global switch (e.g. `lsp.enabled=false`) that lets users disable LSP functionality
- Respect `mcp.allowed`/`mcp.excluded` and folder trust settings
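
The gating described above could be sketched as a single predicate. This is a minimal illustration, not the project's implementation: the `LspSettings` shape mirrors the `lsp` block added to `.vscode/settings.json` in this diff, while `isServerPermitted`, the "deny list wins" rule, and the "enabled unless explicitly disabled" default are assumptions.

```typescript
// Hypothetical settings shape, mirroring the `lsp` block in the settings example.
interface LspSettings {
  enabled?: boolean;
  allowed?: string[];
  excluded?: string[];
}

// Decide whether a given language server may be started automatically.
function isServerPermitted(
  settings: LspSettings,
  serverName: string,
  workspaceTrusted: boolean,
): boolean {
  if (settings.enabled === false) return false; // global switch turned off
  if (!workspaceTrusted) return false; // untrusted folders would need a prompt instead
  if (settings.excluded?.includes(serverName)) return false; // assumption: deny list wins
  if (settings.allowed && settings.allowed.length > 0) {
    return settings.allowed.includes(serverName);
  }
  return true; // assumption: no allow list means every server is permitted
}
```

Keeping the decision in one pure function makes it straightforward to test the interaction of the switch, the lists, and folder trust without starting any process.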

|

||||

|

||||

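#### 3.2.5 Security controls

- Share the same security control mechanisms as MCP
- Enable automatically in trusted workspaces; prompt the user in untrusted workspaces
- Implement a path allow list and process-launch confirmation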

|

||||

|

||||

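#### 3.2.6 Error handling and user notification

- Detect missing language servers and suggest installation commands
- Display error messages through the existing MCP status UI
- Implement a retry/backoff mechanism; detect sandbox environments and suppress automatic startup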
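
One common way to realize the retry/backoff item is exponential backoff with a cap. The sketch below is illustrative only; `backoffDelays`, `startWithRetry`, and the default attempt count and delays are assumptions, not values taken from the plan.

```typescript
// Compute exponential backoff delays: base, 2*base, 4*base, ... capped at maxMs.
function backoffDelays(attempts: number, baseMs: number, maxMs: number): number[] {
  const delays: number[] = [];
  for (let i = 0; i < attempts; i++) {
    delays.push(Math.min(baseMs * 2 ** i, maxMs));
  }
  return delays;
}

// Call `start` until it succeeds or the attempts are exhausted,
// sleeping for the next backoff delay between failed tries.
async function startWithRetry<T>(
  start: () => Promise<T>,
  attempts = 3,
  baseMs = 200,
  maxMs = 2000,
): Promise<T> {
  const delays = backoffDelays(attempts, baseMs, maxMs);
  let lastError: unknown;
  for (let i = 0; i < attempts; i++) {
    try {
      return await start();
    } catch (err) {
      lastError = err;
      if (i < attempts - 1) {
        await new Promise((resolve) => setTimeout(resolve, delays[i]));
      }
    }
  }
  throw lastError; // surface the final failure to the caller
}
```

A caller that detects a sandbox environment could simply skip `startWithRetry` altogether, which matches the intent of suppressing automatic startup there.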

|

||||

|

||||

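### 3.3 Open questions to confirm

1. **Startup integration point**: Integrating the native LSP service in `loadCliConfig` requires coordination with the MCP service

2. **Configuration priority**: If the user already has a cclsp MCP configuration, should both coexist, or should native LSP take precedence?

3. **Feature switch design**: The switch should be global, and LSP and MCP should be independently enabled/disabled

4. **Shared security model**: How to reuse MCP's trust/security control logic in code

5. **Language server management**: How to manage the LSP server lifecycle and keep it in sync with the document editing model

6. **Dependency detection**: Detect LSP server availability and offer fallback options on failure

7. **Testing strategy**: Test LSP and MCP running in parallel, as well as the shared security controls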

|

||||

|

||||

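### 3.4 Security considerations

- Share the same security control model as MCP
- Enable automatic LSP functionality only in trusted workspaces
- Provide a user confirmation mechanism for starting new LSP servers
- Prevent path hijacking by using safe path resolution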

|

||||

|

||||

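### 3.5 Advanced LSP feature support

- **Full LSP functionality**: Support streaming diagnostics, code actions, rename, semantic highlighting, workspace edits, and so on
- **Claude configuration compatibility**: Support importing Claude Code-style `.lsp.json` configuration
- **Performance optimization**: Optimize LSP server startup time and memory usage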

|

||||

|

||||

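### 3.6 User experience

- Provide installation hints rather than installing automatically
- Show LSP and MCP server status in a unified status view
- Provide independent switches so users can control LSP and MCP features
- Provide safe configuration handling and clear error messages for read-only/sandboxed environments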

|

||||

|

||||

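## 4. Implementation Summary

### 4.1 Completed work

1. **NativeLspService class**: Created the core service class, covering language detection, configuration merging, and LSP connection management
2. **LSP connection factory**: Implemented creation and management of stdio-based LSP connections
3. **Language detection**: Implemented automatic language detection based on file extensions and project configuration files
4. **Configuration system**: Implemented merging of built-in presets, user configuration, and Claude-compatible configuration
5. **Security controls**: Implemented the security control mechanisms shared with MCP, including trust checks, user confirmation, and path safety validation
6. **CLI integration**: Added the LSP service initialization point in the `loadCliConfig` function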

|

||||

|

||||

### 4.2 Key components

#### 4.2.1 LspConnectionFactory

- Implements LSP connections using `vscode-jsonrpc` and `vscode-languageserver-protocol`
- Supports the stdio transport and can be extended to support TCP
- Provides full lifecycle management: connection creation, initialization, and shutdown
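
For readers unfamiliar with what travels over the stdio transport, the sketch below shows the LSP base-protocol framing: a `Content-Length` header, a blank line, then the JSON-RPC body. It is written against Node's `Buffer` only; in the plan this framing is delegated to `vscode-jsonrpc`, and the helper names `encodeMessage` and `decodeMessage` are hypothetical.

```typescript
// Frame a JSON-RPC message as the LSP base protocol requires:
// "Content-Length: <byte length>\r\n\r\n" followed by the UTF-8 JSON body.
function encodeMessage(message: object): Buffer {
  const body = Buffer.from(JSON.stringify(message), 'utf8');
  const header = Buffer.from(`Content-Length: ${body.length}\r\n\r\n`, 'ascii');
  return Buffer.concat([header, body]);
}

// Try to decode one framed message from the front of a buffer.
// Returns the parsed message plus the unread remainder,
// or undefined when the buffer does not yet hold a complete message.
function decodeMessage(buffer: Buffer): { message: unknown; rest: Buffer } | undefined {
  const headerEnd = buffer.indexOf('\r\n\r\n');
  if (headerEnd === -1) return undefined;
  const header = buffer.subarray(0, headerEnd).toString('ascii');
  const match = /Content-Length: (\d+)/i.exec(header);
  if (!match) throw new Error('Missing Content-Length header');
  const length = Number(match[1]);
  const bodyStart = headerEnd + 4;
  if (buffer.length < bodyStart + length) return undefined;
  const body = buffer.subarray(bodyStart, bodyStart + length).toString('utf8');
  return { message: JSON.parse(body), rest: buffer.subarray(bodyStart + length) };
}
```

Because the length is counted in bytes rather than characters, the encoder measures the encoded body, which matters as soon as a message contains non-ASCII text.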

|

||||

|

||||

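#### 4.2.2 NativeLspService

- **Language detection**: Scans project files and configuration files to identify programming languages
- **Configuration merging**: Merges built-in presets, user configuration, and compatibility-layer configuration by priority
- **LSP server management**: Start, stop, and status management
- **Security controls**: Trust and confirmation mechanisms shared with MCP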

|

||||

|

||||

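#### 4.2.3 Configuration architecture

- **Built-in presets**: Provide default LSP server configurations for common languages
- **User configuration**: Supports the `.lsp.json` file format
- **Claude compatibility**: Can import Claude Code's LSP configuration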
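
A layered merge of these three sources might look like the following sketch. The plan does not state the priority order, so the order used in the usage note (presets lowest, user configuration highest) is an assumption, as are the `ServerConfig` fields and the `mergeLayers` helper.

```typescript
// Hypothetical per-server configuration; the field names are assumptions.
interface ServerConfig {
  command: string;
  args?: string[];
}

type ConfigLayer = Record<string, ServerConfig>;

// Merge layers from lowest to highest priority. Later layers override
// earlier ones per server name, field by field.
function mergeLayers(...layers: ConfigLayer[]): ConfigLayer {
  const merged: ConfigLayer = {};
  for (const layer of layers) {
    for (const [name, config] of Object.entries(layer)) {
      merged[name] = { ...merged[name], ...config };
    }
  }
  return merged;
}
```

Under the assumed order, a call such as `mergeLayers(presets, claudeCompat, userConfig)` lets a user override only the `command` of a preset while inheriting its `args`.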

|

||||

|

||||

### 4.3 Dependency management

- Uses `vscode-languageserver-protocol` for LSP protocol communication
- Uses `vscode-jsonrpc` for JSON-RPC message passing
- Uses `vscode-languageserver-textdocument` to manage document versions

|

||||

|

||||

### 4.4 Security features

- Workspace trust checks
- User confirmation mechanism (for untrusted workspaces)
- Command existence validation
- Path safety checks

|

||||

|

||||

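## 5. Conclusion

The native LSP client is currently the choice that best fits the Qwen Code architecture: it provides full LSP functionality, lower latency, and a better user experience. As a first-class extension mechanism running in parallel with MCP, LSP will share MCP's security control policies while offering richer code intelligence. cclsp+MCP can be retained as a compatibility layer to support existing MCP workflows.

This implementation will give Qwen Code CLI full LSP functionality, including go-to-definition, find-references, auto-completion, and code diagnostics, giving the AI agent a richer understanding of code.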

|

||||

@@ -11,7 +11,6 @@ export default {

|

||||

type: 'separator',

|

||||

},

|

||||

'sdk-typescript': 'Typescript SDK',

|

||||

'sdk-java': 'Java SDK(alpha)',

|

||||

'Dive Into Qwen Code': {

|

||||

title: 'Dive Into Qwen Code',

|

||||

type: 'separator',

|

||||

|

||||

@@ -1,312 +0,0 @@

|

||||

# Qwen Code Java SDK

|

||||

|

||||

The Qwen Code Java SDK is a minimal, experimental SDK for programmatic access to Qwen Code functionality. It provides a Java interface to the Qwen Code CLI, allowing developers to integrate Qwen Code capabilities into their Java applications.

|

||||

|

||||

## Requirements

|

||||

|

||||

- Java >= 1.8

|

||||

- Maven >= 3.6.0 (for building from source)

|

||||

- qwen-code >= 0.5.0

|

||||

|

||||

### Dependencies

|

||||

|

||||

- **Logging**: ch.qos.logback:logback-classic

|

||||

- **Utilities**: org.apache.commons:commons-lang3

|

||||

- **JSON Processing**: com.alibaba.fastjson2:fastjson2

|

||||

- **Testing**: JUnit 5 (org.junit.jupiter:junit-jupiter)

|

||||

|

||||

## Installation

|

||||

|

||||

Add the following dependency to your Maven `pom.xml`:

|

||||

|

||||

```xml

|

||||

<dependency>

|

||||

<groupId>com.alibaba</groupId>

|

||||

<artifactId>qwencode-sdk</artifactId>

|

||||

<version>{$version}</version>

|

||||

</dependency>

|

||||

```

|

||||

|

||||

Or if using Gradle, add to your `build.gradle`:

|

||||

|

||||

```gradle

|

||||

implementation 'com.alibaba:qwencode-sdk:{$version}'

|

||||

```

|

||||

|

||||

## Building and Running

|

||||

|

||||

### Build Commands

|

||||

|

||||

```bash

|

||||

# Compile the project

|

||||

mvn compile

|

||||

|

||||

# Run tests

|

||||

mvn test

|

||||

|

||||

# Package the JAR

|

||||

mvn package

|

||||

|

||||

# Install to local repository

|

||||

mvn install

|

||||

```

|

||||

|

||||

## Quick Start

|

||||

|

||||

The simplest way to use the SDK is through the `QwenCodeCli.simpleQuery()` method:

|

||||

|

||||

```java

|

||||

public static void runSimpleExample() {

|

||||

List<String> result = QwenCodeCli.simpleQuery("hello world");

|

||||

result.forEach(logger::info);

|

||||

}

|

||||

```

|

||||

|

||||

For more advanced usage with custom transport options:

|

||||

|

||||

```java

|

||||

public static void runTransportOptionsExample() {

|

||||

TransportOptions options = new TransportOptions()

|

||||

.setModel("qwen3-coder-flash")

|

||||

.setPermissionMode(PermissionMode.AUTO_EDIT)

|

||||

.setCwd("./")

|

||||

.setEnv(new HashMap<String, String>() {{put("CUSTOM_VAR", "value");}})

|

||||

.setIncludePartialMessages(true)

|

||||

.setTurnTimeout(new Timeout(120L, TimeUnit.SECONDS))

|

||||

.setMessageTimeout(new Timeout(90L, TimeUnit.SECONDS))

|

||||

.setAllowedTools(Arrays.asList("read_file", "write_file", "list_directory"));

|

||||

|

||||

List<String> result = QwenCodeCli.simpleQuery("who are you, what are your capabilities?", options);

|

||||

result.forEach(logger::info);

|

||||

}

|

||||

```

|

||||

|

||||

For streaming content handling with custom content consumers:

|

||||

|

||||

```java

|

||||

public static void runStreamingExample() {

|

||||

QwenCodeCli.simpleQuery("who are you, what are your capabilities?",

|

||||

new TransportOptions().setMessageTimeout(new Timeout(10L, TimeUnit.SECONDS)), new AssistantContentSimpleConsumers() {

|

||||

|

||||

@Override

|

||||

public void onText(Session session, TextAssistantContent textAssistantContent) {

|

||||

logger.info("Text content received: {}", textAssistantContent.getText());

|

||||

}

|

||||

|

||||

@Override

|

||||

public void onThinking(Session session, ThingkingAssistantContent thingkingAssistantContent) {

|

||||

logger.info("Thinking content received: {}", thingkingAssistantContent.getThinking());

|

||||

}

|

||||

|

||||

@Override

|

||||

public void onToolUse(Session session, ToolUseAssistantContent toolUseContent) {

|

||||

logger.info("Tool use content received: {} with arguments: {}",

|

||||

toolUseContent, toolUseContent.getInput());

|

||||

}

|

||||

|

||||

@Override

|

||||

public void onToolResult(Session session, ToolResultAssistantContent toolResultContent) {

|

||||

logger.info("Tool result content received: {}", toolResultContent.getContent());

|

||||

}

|

||||

|

||||

@Override

|

||||

public void onOtherContent(Session session, AssistantContent<?> other) {

|

||||

logger.info("Other content received: {}", other);

|

||||

}

|

||||

|

||||

@Override

|

||||

public void onUsage(Session session, AssistantUsage assistantUsage) {

|

||||

logger.info("Usage information received: Input tokens: {}, Output tokens: {}",

|

||||

assistantUsage.getUsage().getInputTokens(), assistantUsage.getUsage().getOutputTokens());

|

||||

}

|

||||

}.setDefaultPermissionOperation(Operation.allow));

|

||||

logger.info("Streaming example completed.");

|

||||

}

|

||||

```

|

||||

|

||||

For more examples, see `src/test/java/com/alibaba/qwen/code/cli/example`.

|

||||

|

||||

## Architecture

|

||||

|

||||

The SDK follows a layered architecture:

|

||||

|

||||

- **API Layer**: Provides the main entry points through `QwenCodeCli` class with simple static methods for basic usage

|

||||

- **Session Layer**: Manages communication sessions with the Qwen Code CLI through the `Session` class

|

||||

- **Transport Layer**: Handles the communication mechanism between the SDK and CLI process (currently using process transport via `ProcessTransport`)

|

||||

- **Protocol Layer**: Defines data structures for communication based on the CLI protocol

|

||||

- **Utils**: Common utilities for concurrent execution, timeout handling, and error management

|

||||

|

||||

## Key Features

|

||||

|

||||

### Permission Modes

|

||||

|

||||

The SDK supports different permission modes for controlling tool execution:

|

||||

|

||||

- **`default`**: Write tools are denied unless approved via `canUseTool` callback or in `allowedTools`. Read-only tools execute without confirmation.

|

||||

- **`plan`**: Blocks all write tools and instructs the AI to present a plan first.

|

||||

- **`auto-edit`**: Auto-approves edit tools (edit, write_file), while other tools require confirmation.

|

||||

- **`yolo`**: All tools execute automatically without confirmation.

|

||||

|

||||

### Session Event Consumers and Assistant Content Consumers

|

||||

|

||||

The SDK provides two key interfaces for handling events and content from the CLI:

|

||||

|

||||

#### SessionEventConsumers Interface

|

||||

|

||||

The `SessionEventConsumers` interface provides callbacks for different types of messages during a session:

|

||||

|

||||

- `onSystemMessage`: Handles system messages from the CLI (receives Session and SDKSystemMessage)

|

||||

- `onResultMessage`: Handles result messages from the CLI (receives Session and SDKResultMessage)

|

||||

- `onAssistantMessage`: Handles assistant messages (AI responses) (receives Session and SDKAssistantMessage)

|

||||

- `onPartialAssistantMessage`: Handles partial assistant messages during streaming (receives Session and SDKPartialAssistantMessage)

|

||||

- `onUserMessage`: Handles user messages (receives Session and SDKUserMessage)

|

||||

- `onOtherMessage`: Handles other types of messages (receives Session and String message)

|

||||

- `onControlResponse`: Handles control responses (receives Session and CLIControlResponse)

|

||||

- `onControlRequest`: Handles control requests (receives Session and CLIControlRequest, returns CLIControlResponse)

|

||||

- `onPermissionRequest`: Handles permission requests (receives Session and CLIControlRequest<CLIControlPermissionRequest>, returns Behavior)

|

||||

|

||||

#### AssistantContentConsumers Interface

|

||||

|

||||

The `AssistantContentConsumers` interface handles different types of content within assistant messages:

|

||||

|

||||

- `onText`: Handles text content (receives Session and TextAssistantContent)

|

||||

- `onThinking`: Handles thinking content (receives Session and ThingkingAssistantContent)

|

||||

- `onToolUse`: Handles tool use content (receives Session and ToolUseAssistantContent)

|

||||

- `onToolResult`: Handles tool result content (receives Session and ToolResultAssistantContent)

|

||||

- `onOtherContent`: Handles other content types (receives Session and AssistantContent)

|

||||

- `onUsage`: Handles usage information (receives Session and AssistantUsage)

|

||||

- `onPermissionRequest`: Handles permission requests (receives Session and CLIControlPermissionRequest, returns Behavior)

|

||||

- `onOtherControlRequest`: Handles other control requests (receives Session and ControlRequestPayload, returns ControlResponsePayload)

|

||||

|

||||

#### Relationship Between the Interfaces

|

||||

|

||||

**Important Note on Event Hierarchy:**

|

||||

|

||||

- `SessionEventConsumers` is the **high-level** event processor that handles different message types (system, assistant, user, etc.)

|

||||

- `AssistantContentConsumers` is the **low-level** content processor that handles different types of content within assistant messages (text, tools, thinking, etc.)

|

||||

|

||||

**Processor Relationship:**

|

||||

|

||||

- `SessionEventConsumers` → `AssistantContentConsumers` (SessionEventConsumers uses AssistantContentConsumers to process content within assistant messages)

|

||||

|

||||

**Event Derivation Relationships:**

|

||||

|

||||

- `onAssistantMessage` → `onText`, `onThinking`, `onToolUse`, `onToolResult`, `onOtherContent`, `onUsage`

|

||||

- `onPartialAssistantMessage` → `onText`, `onThinking`, `onToolUse`, `onToolResult`, `onOtherContent`

|

||||

- `onControlRequest` → `onPermissionRequest`, `onOtherControlRequest`

|

||||

|

||||

**Event Timeout Relationships:**

|

||||

|

||||

Each event handler method has a corresponding timeout method that allows customizing the timeout behavior for that specific event:

|

||||

|

||||

- `onSystemMessage` ↔ `onSystemMessageTimeout`

|

||||

- `onResultMessage` ↔ `onResultMessageTimeout`

|

||||

- `onAssistantMessage` ↔ `onAssistantMessageTimeout`

|

||||

- `onPartialAssistantMessage` ↔ `onPartialAssistantMessageTimeout`

|

||||

- `onUserMessage` ↔ `onUserMessageTimeout`

|

||||

- `onOtherMessage` ↔ `onOtherMessageTimeout`

|

||||

- `onControlResponse` ↔ `onControlResponseTimeout`

|

||||

- `onControlRequest` ↔ `onControlRequestTimeout`

|

||||

|

||||

For AssistantContentConsumers timeout methods:

|

||||

|

||||

- `onText` ↔ `onTextTimeout`

|

||||

- `onThinking` ↔ `onThinkingTimeout`

|

||||

- `onToolUse` ↔ `onToolUseTimeout`

|

||||

- `onToolResult` ↔ `onToolResultTimeout`

|

||||

- `onOtherContent` ↔ `onOtherContentTimeout`

|

||||

- `onPermissionRequest` ↔ `onPermissionRequestTimeout`

|

||||

- `onOtherControlRequest` ↔ `onOtherControlRequestTimeout`

|

||||

|

||||

**Default Timeout Values:**

|

||||

|

||||

- `SessionEventSimpleConsumers` default timeout: 180 seconds (Timeout.TIMEOUT_180_SECONDS)

|

||||

- `AssistantContentSimpleConsumers` default timeout: 60 seconds (Timeout.TIMEOUT_60_SECONDS)

|

||||

|

||||

**Timeout Hierarchy Requirements:**

|

||||

|

||||

For proper operation, the following timeout relationships should be maintained:

|

||||

|

||||

- `onAssistantMessageTimeout` return value should be greater than `onTextTimeout`, `onThinkingTimeout`, `onToolUseTimeout`, `onToolResultTimeout`, and `onOtherContentTimeout` return values

|

||||

- `onControlRequestTimeout` return value should be greater than `onPermissionRequestTimeout` and `onOtherControlRequestTimeout` return values

|

||||

|

||||

### Transport Options

|

||||

|

||||

The `TransportOptions` class allows configuration of how the SDK communicates with the Qwen Code CLI:

|

||||

|

||||

- `pathToQwenExecutable`: Path to the Qwen Code CLI executable

|

||||

- `cwd`: Working directory for the CLI process

|

||||

- `model`: AI model to use for the session

|

||||

- `permissionMode`: Permission mode that controls tool execution

|

||||

- `env`: Environment variables to pass to the CLI process

|

||||

- `maxSessionTurns`: Limits the number of conversation turns in a session

|

||||

- `coreTools`: List of core tools that should be available to the AI

|

||||

- `excludeTools`: List of tools to exclude from being available to the AI

|

||||

- `allowedTools`: List of tools that are pre-approved for use without additional confirmation

|

||||

- `authType`: Authentication type to use for the session

|

||||

- `includePartialMessages`: Enables receiving partial messages during streaming responses

|

||||

- `skillsEnable`: Enables or disables skills functionality for the session

|

||||

- `turnTimeout`: Timeout for a complete turn of conversation

|

||||

- `messageTimeout`: Timeout for individual messages within a turn

|

||||

- `resumeSessionId`: ID of a previous session to resume

|

||||

- `otherOptions`: Additional command-line options to pass to the CLI

|

||||

|

||||

### Session Control Features

|

||||

|

||||

- **Session creation**: Use `QwenCodeCli.newSession()` to create a new session with custom options

|

||||

- **Session management**: The `Session` class provides methods to send prompts, handle responses, and manage session state

|

||||

- **Session cleanup**: Always close sessions using `session.close()` to properly terminate the CLI process

|

||||

- **Session resumption**: Use `setResumeSessionId()` in `TransportOptions` to resume a previous session

|

||||

- **Session interruption**: Use `session.interrupt()` to interrupt a currently running prompt

|

||||

- **Dynamic model switching**: Use `session.setModel()` to change the model during a session

|

||||

- **Dynamic permission mode switching**: Use `session.setPermissionMode()` to change the permission mode during a session

|

||||

|

||||

### Thread Pool Configuration

|

||||

|

||||

The SDK uses a thread pool for managing concurrent operations with the following default configuration:

|

||||

|

||||

- **Core Pool Size**: 30 threads

|

||||

- **Maximum Pool Size**: 100 threads

|

||||

- **Keep-Alive Time**: 60 seconds

|

||||

- **Queue Capacity**: 300 tasks (using LinkedBlockingQueue)

|

||||

- **Thread Naming**: "qwen_code_cli-pool-{number}"

|

||||

- **Daemon Threads**: false

|

||||

- **Rejected Execution Handler**: CallerRunsPolicy

|

||||

|

||||

## Error Handling

|

||||

|

||||

The SDK provides specific exception types for different error scenarios:

|

||||

|

||||

- `SessionControlException`: Thrown when there's an issue with session control (creation, initialization, etc.)

|

||||

- `SessionSendPromptException`: Thrown when there's an issue sending a prompt or receiving a response

|

||||

- `SessionClosedException`: Thrown when attempting to use a closed session

|

||||

|

||||

## FAQ / Troubleshooting

|

||||

|

||||

### Q: Do I need to install the Qwen CLI separately?

|

||||

|

||||

A: Yes. The SDK requires Qwen CLI 0.5.5 or higher.

|

||||

|

||||

### Q: What Java versions are supported?

|

||||

|

||||

A: The SDK requires Java 1.8 or higher.

|

||||

|

||||

### Q: How do I handle long-running requests?

|

||||

|

||||

A: The SDK includes timeout utilities. You can configure timeouts using the `Timeout` class in `TransportOptions`.

|

||||

|

||||

### Q: Why are some tools not executing?

|

||||

|

||||

A: This is likely due to permission modes. Check your permission mode settings and consider using `allowedTools` to pre-approve certain tools.

|

||||

|

||||

### Q: How do I resume a previous session?

|

||||

|

||||

A: Use the `setResumeSessionId()` method in `TransportOptions` to resume a previous session.

|

||||

|

||||

### Q: Can I customize the environment for the CLI process?

|

||||

|

||||

A: Yes, use the `setEnv()` method in `TransportOptions` to pass environment variables to the CLI process.

|

||||

|

||||

## License

|

||||

|

||||

Apache-2.0 - see [LICENSE](./LICENSE) for details.

|

||||

@@ -10,5 +10,4 @@ export default {

|

||||

'web-search': 'Web Search',

|

||||

memory: 'Memory',

|

||||

'mcp-server': 'MCP Servers',

|

||||

sandbox: 'Sandboxing',

|

||||

};

|

||||

|

||||

@@ -1,90 +0,0 @@

|

||||

## Customizing the sandbox environment (Docker/Podman)

|

||||

|

||||

### `BUILD_SANDBOX` is not currently supported when Qwen Code is installed from the npm package

1. Building a custom sandbox requires the build scripts (`scripts/build_sandbox.js`) from the source repository.
2. These build scripts are not included in the packages published to npm.
3. The code contains hard-coded path checks that explicitly reject build requests from non-source environments.

If you need extra tools inside the container (e.g., `git`, `python`, `rg`), create a custom Dockerfile as follows.

#### 1. Clone the Qwen Code project: https://github.com/QwenLM/qwen-code.git

#### 2. Run the following commands from the source repository directory

|

||||

|

||||

```bash

|

||||

# 1. First, install the dependencies of the project

|

||||

npm install

|

||||

|

||||

# 2. Build the Qwen Code project

|

||||

npm run build

|

||||

|

||||

# 3. Verify that the dist directory has been generated

|

||||

ls -la packages/cli/dist/

|

||||

|

||||

# 4. Create a global link in the CLI package directory

|

||||

cd packages/cli

|

||||

npm link

|

||||

|

||||

# 5. Verify the link (it should now point to the source code)

|

||||

which qwen

|

||||

# Expected output: /xxx/xxx/.nvm/versions/node/v24.11.1/bin/qwen

|

||||

# Or similar paths, but it should be a symbolic link

|

||||

|

||||

# 6. Inspect the symbolic link to see the source path it points to

|

||||

ls -la $(dirname $(which qwen))/../lib/node_modules/@qwen-code/qwen-code

|

||||

# It should show that this is a symbolic link pointing to your source code directory

|

||||

|

||||

# 7. Check the qwen version

|

||||

qwen -v

|

||||

# Note: npm link overwrites the global qwen. To avoid confusing two installs that report the same version number, uninstall the global CLI first

|

||||

```

|

||||

|

||||

#### 3. Create your sandbox Dockerfile in the root directory of your own project

|

||||

|

||||

- Path: `.qwen/sandbox.Dockerfile`

|

||||

|

||||

- Official image: https://github.com/QwenLM/qwen-code/pkgs/container/qwen-code

|

||||

|

||||

```dockerfile

|

||||

# Based on the official Qwen sandbox image (pinning an explicit version is recommended)

|

||||

FROM ghcr.io/qwenlm/qwen-code:sha-570ec43

|

||||

# Add your extra tools here

|

||||

RUN apt-get update && apt-get install -y \

|

||||

git \

|

||||

python3 \

|

||||

ripgrep

|

||||

```

|

||||

|

||||

#### 4. Build the first sandbox image from the root directory of your project

|

||||

|

||||

```bash

|

||||

GEMINI_SANDBOX=docker BUILD_SANDBOX=1 qwen -s

|

||||

# Check that the sandbox version reported at launch matches your custom image; if it does, the build succeeded

|

||||

```

|

||||

|

||||

This builds a project-specific image based on the default sandbox image.

|

||||

|

||||

#### Remove npm link

|

||||

|

||||

- To restore the official qwen CLI, remove the npm link:

|

||||

|

||||

```bash

|

||||

# Method 1: Unlink globally

|

||||

npm unlink -g @qwen-code/qwen-code

|

||||

|

||||

# Method 2: Remove it in the packages/cli directory

|

||||

cd packages/cli

|

||||

npm unlink

|

||||

|

||||

# Verify that the link has been removed

|

||||

which qwen

|

||||

# It should display "qwen not found"

|

||||

|

||||

# Reinstall the global version if necessary

|

||||

npm install -g @qwen-code/qwen-code

|

||||

|

||||

# Verify the reinstall

|

||||

which qwen

|

||||

qwen --version

|

||||

```

|

||||

@@ -12,7 +12,6 @@ export default {

|

||||

},

|

||||

'integration-vscode': 'Visual Studio Code',

|

||||

'integration-zed': 'Zed IDE',

|

||||

'integration-jetbrains': 'JetBrains IDEs',

|

||||

'integration-github-action': 'Github Actions',

|

||||

'Code with Qwen Code': {

|

||||

type: 'separator',

|

||||

|

||||

@@ -104,7 +104,7 @@ Settings are organized into categories. All settings should be placed within the

|

||||

| `model.name` | string | The Qwen model to use for conversations. | `undefined` |

|

||||

| `model.maxSessionTurns` | number | Maximum number of user/model/tool turns to keep in a session. -1 means unlimited. | `-1` |

|

||||

| `model.summarizeToolOutput` | object | Enables or disables the summarization of tool output. You can specify the token budget for the summarization using the `tokenBudget` setting. Note: Currently only the `run_shell_command` tool is supported. For example `{"run_shell_command": {"tokenBudget": 2000}}` | `undefined` |

|

||||

| `model.generationConfig` | object | Advanced overrides passed to the underlying content generator. Supports request controls such as `timeout`, `maxRetries`, `disableCacheControl`, and `customHeaders` (custom HTTP headers for API requests), along with fine-tuning knobs under `samplingParams` (for example `temperature`, `top_p`, `max_tokens`). Leave unset to rely on provider defaults. | `undefined` |

|

||||

| `model.generationConfig` | object | Advanced overrides passed to the underlying content generator. Supports request controls such as `timeout`, `maxRetries`, and `disableCacheControl`, along with fine-tuning knobs under `samplingParams` (for example `temperature`, `top_p`, `max_tokens`). Leave unset to rely on provider defaults. | `undefined` |

|

||||

| `model.chatCompression.contextPercentageThreshold` | number | Sets the threshold for chat history compression as a percentage of the model's total token limit. This is a value between 0 and 1 that applies to both automatic compression and the manual `/compress` command. For example, a value of `0.6` will trigger compression when the chat history exceeds 60% of the token limit. Use `0` to disable compression entirely. | `0.7` |

|

||||

| `model.skipNextSpeakerCheck` | boolean | Skip the next speaker check. | `false` |

|

||||

| `model.skipLoopDetection` | boolean | Disables loop detection checks. Loop detection prevents infinite loops in AI responses but can generate false positives that interrupt legitimate workflows. Enable this option if you experience frequent false positive loop detection interruptions. | `false` |

|

||||

@@ -114,16 +114,12 @@ Settings are organized into categories. All settings should be placed within the

|

||||

|

||||

**Example model.generationConfig:**

|

||||

|

||||

```json

|

||||

```

|

||||

{

|

||||

"model": {

|

||||

"generationConfig": {

|

||||

"timeout": 60000,

|

||||

"disableCacheControl": false,

|

||||

"customHeaders": {

|

||||

"X-Request-ID": "req-123",

|

||||

"X-User-ID": "user-456"

|

||||

},

|

||||

"samplingParams": {

|

||||

"temperature": 0.2,

|

||||

"top_p": 0.8,

|

||||

@@ -134,107 +130,12 @@ Settings are organized into categories. All settings should be placed within the

|

||||

}

|

||||

```

|

||||

|

||||

The `customHeaders` field allows you to add custom HTTP headers to all API requests. This is useful for request tracing, monitoring, API gateway routing, or when different models require different headers. If `customHeaders` is defined in `modelProviders[].generationConfig.customHeaders`, it will be used directly; otherwise, headers from `model.generationConfig.customHeaders` will be used. No merging occurs between the two levels.

|

||||

|

||||

**model.openAILoggingDir examples:**

- `"~/qwen-logs"` - Logs to `~/qwen-logs` directory
- `"./custom-logs"` - Logs to `./custom-logs` relative to current directory
- `"/tmp/openai-logs"` - Logs to absolute path `/tmp/openai-logs`

|

||||

|

||||

#### modelProviders

|

||||

|

||||

Use `modelProviders` to declare curated model lists per auth type that the `/model` picker can switch between. Keys must be valid auth types (`openai`, `anthropic`, `gemini`, `vertex-ai`, etc.). Each entry requires an `id` and **must include `envKey`**, with optional `name`, `description`, `baseUrl`, and `generationConfig`. Credentials are never persisted in settings; the runtime reads them from `process.env[envKey]`. Qwen OAuth models remain hard-coded and cannot be overridden.

|

||||

|

||||

##### Example

|

||||

|

||||

```json
{
  "modelProviders": {
    "openai": [
      {
        "id": "gpt-4o",
        "name": "GPT-4o",
        "envKey": "OPENAI_API_KEY",
        "baseUrl": "https://api.openai.com/v1",
        "generationConfig": {
          "timeout": 60000,
          "maxRetries": 3,
          "customHeaders": {
            "X-Model-Version": "v1.0",
            "X-Request-Priority": "high"
          },
          "samplingParams": { "temperature": 0.2 }
        }
      }
    ],
    "anthropic": [
      {
        "id": "claude-3-5-sonnet",
        "envKey": "ANTHROPIC_API_KEY",
        "baseUrl": "https://api.anthropic.com/v1"
      }
    ],
    "gemini": [
      {
        "id": "gemini-2.0-flash",
        "name": "Gemini 2.0 Flash",
        "envKey": "GEMINI_API_KEY",
        "baseUrl": "https://generativelanguage.googleapis.com"
      }
    ],
    "vertex-ai": [
      {
        "id": "gemini-1.5-pro-vertex",
        "envKey": "GOOGLE_API_KEY",
        "baseUrl": "https://generativelanguage.googleapis.com"
      }
    ]
  }
}
```

|

||||

|

||||

> [!note]
> Only the `/model` command exposes non-default auth types. Anthropic, Gemini, Vertex AI, etc., must be defined via `modelProviders`. The `/auth` command intentionally lists only the built-in Qwen OAuth and OpenAI flows.

|

||||

|

||||

##### Resolution layers and atomicity

|

||||

|

||||

The effective auth/model/credential values are chosen per field using the following precedence (first present wins). You can combine `--auth-type` with `--model` to point directly at a provider entry; these CLI flags run before other layers.

|

||||

|

||||

| Layer (highest → lowest) | authType | model | apiKey | baseUrl | apiKeyEnvKey | proxy |
| --- | --- | --- | --- | --- | --- | --- |
| Programmatic overrides | `/auth ` | `/auth` input | `/auth` input | `/auth` input | — | — |
| Model provider selection | — | `modelProvider.id` | `env[modelProvider.envKey]` | `modelProvider.baseUrl` | `modelProvider.envKey` | — |
| CLI arguments | `--auth-type` | `--model` | `--openaiApiKey` (or provider-specific equivalents) | `--openaiBaseUrl` (or provider-specific equivalents) | — | — |
| Environment variables | — | Provider-specific mapping (e.g. `OPENAI_MODEL`) | Provider-specific mapping (e.g. `OPENAI_API_KEY`) | Provider-specific mapping (e.g. `OPENAI_BASE_URL`) | — | — |
| Settings (`settings.json`) | `security.auth.selectedType` | `model.name` | `security.auth.apiKey` | `security.auth.baseUrl` | — | — |
| Default / computed | Falls back to `AuthType.QWEN_OAUTH` | Built-in default (OpenAI ⇒ `qwen3-coder-plus`) | — | — | — | `Config.getProxy()` if configured |

|

||||

|

||||

\*When present, CLI auth flags override settings. Otherwise, `security.auth.selectedType` or the implicit default determine the auth type. Qwen OAuth and OpenAI are the only auth types surfaced without extra configuration.
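The "first present wins" rule in the table above can be sketched roughly as follows. This is a minimal illustration, not the actual resolver: the `ResolvedFields` type and the `resolveFields` helper are hypothetical names, and the layer order simply mirrors the table rows from top to bottom.

```typescript
// Hypothetical shape for one resolution layer; not the real Qwen Code types.
type ResolvedFields = {
  authType?: string;
  model?: string;
  apiKey?: string;
  baseUrl?: string;
};

// Walk the layers from highest to lowest priority and, for each field,
// keep the first value that is present.
function resolveFields(layers: ResolvedFields[]): ResolvedFields {
  const keys: (keyof ResolvedFields)[] = ['authType', 'model', 'apiKey', 'baseUrl'];
  const result: ResolvedFields = {};
  for (const key of keys) {
    for (const layer of layers) {
      const value = layer[key];
      if (value !== undefined) {
        result[key] = value;
        break; // a higher layer already supplied this field
      }
    }
  }
  return result;
}

// Example values are made up for illustration.
const resolved = resolveFields([
  {},                                              // programmatic overrides
  {},                                              // model provider selection
  { model: 'my-model' },                           // CLI arguments
  { apiKey: 'key-from-env' },                      // environment variables
  { authType: 'openai', model: 'settings-model' }, // settings.json
]);
```

Here `model` comes from the CLI layer even though `settings.json` also defines one, while `authType` falls through to the settings layer because no higher layer supplies it.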

|

||||

|

||||

Model-provider sourced values are applied atomically: once a provider model is active, every field it defines is protected from lower layers until you manually clear credentials via `/auth`. The final `generationConfig` is the projection across all layers—lower layers only fill gaps left by higher ones, and the provider layer remains impenetrable.

|

||||

|

||||

The merge strategy for `modelProviders` is REPLACE: the entire `modelProviders` from project settings will override the corresponding section in user settings, rather than merging the two.
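As a rough sketch of what REPLACE means here, assuming a heavily simplified settings shape (the `Settings` and `ProviderEntry` types and the `effectiveModelProviders` helper are hypothetical, introduced only for illustration):

```typescript
// Hypothetical, simplified settings shape for illustration only.
type ProviderEntry = { id: string; envKey: string };
type Settings = {
  modelProviders?: Record<string, ProviderEntry[]>;
};

// REPLACE semantics: if the project scope defines modelProviders at all,
// it is used as-is and the user-scope value is ignored (no deep merge).
function effectiveModelProviders(
  user: Settings,
  project: Settings,
): Record<string, ProviderEntry[]> | undefined {
  return project.modelProviders ?? user.modelProviders;
}

const userSettings: Settings = {
  modelProviders: {
    openai: [{ id: 'gpt-4o', envKey: 'OPENAI_API_KEY' }],
    anthropic: [{ id: 'claude-3-5-sonnet', envKey: 'ANTHROPIC_API_KEY' }],
  },
};
const projectSettings: Settings = {
  modelProviders: {
    openai: [{ id: 'gpt-4o', envKey: 'OPENAI_API_KEY' }],
  },
};

const effective = effectiveModelProviders(userSettings, projectSettings);
```

With these inputs the `anthropic` list from user settings does not survive, because the project-scope object replaces the whole section instead of being merged key by key.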

|

||||

|

||||

##### Generation config layering

|

||||

|

||||

Per-field precedence for `generationConfig`:

1. Programmatic overrides (e.g. runtime `/model`, `/auth` changes)
2. `modelProviders[authType][].generationConfig`
3. `settings.model.generationConfig`
4. Content-generator defaults (`getDefaultGenerationConfig` for OpenAI, `getParameterValue` for Gemini, etc.)

|

||||

|

||||

`samplingParams` and `customHeaders` are both treated atomically; provider values replace the entire object. If `modelProviders[].generationConfig` defines these fields, they are used directly; otherwise, values from `model.generationConfig` are used. No merging occurs between provider and global configuration levels. Defaults from the content generator apply last so each provider retains its tuned baseline.
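The layering described above might look roughly like this. It is a sketch under stated assumptions: the `GenerationConfig` type is a simplified stand-in and `layerGenerationConfig` is a hypothetical helper, not the function Qwen Code actually uses.

```typescript
// Hypothetical, simplified config shape for illustration only.
type GenerationConfig = {
  timeout?: number;
  maxRetries?: number;
  samplingParams?: Record<string, number>;
  customHeaders?: Record<string, string>;
};

// Layers are ordered highest priority first. Each top-level field is taken
// from the first layer that defines it. Object-valued fields such as
// samplingParams and customHeaders are copied whole, never deep-merged.
function layerGenerationConfig(layers: GenerationConfig[]): GenerationConfig {
  const result: GenerationConfig = {};
  for (const layer of layers) {
    for (const [key, value] of Object.entries(layer)) {
      if (value !== undefined && !(key in result)) {
        (result as Record<string, unknown>)[key] = value;
      }
    }
  }
  return result;
}

// Example values are made up for illustration.
const providerConfig: GenerationConfig = {
  timeout: 60000,
  customHeaders: { 'X-Model-Version': 'v1.0' },
};
const globalConfig: GenerationConfig = {
  maxRetries: 3,
  customHeaders: { 'X-Request-ID': 'req-123' },
  samplingParams: { temperature: 0.2 },
};

const merged = layerGenerationConfig([providerConfig, globalConfig]);
```

In this example `merged.customHeaders` contains only `X-Model-Version`, since the provider-level object wins as a unit, while `maxRetries` and `samplingParams` are filled in from the global layer because the provider layer leaves them unset.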

|

||||

|

||||

##### Selection persistence and recommendations

|

||||

|

||||

> [!important]
> Define `modelProviders` in the user-scope `~/.qwen/settings.json` whenever possible and avoid persisting credential overrides in any scope. Keeping the provider catalog in user settings prevents merge/override conflicts between project and user scopes and ensures `/auth` and `/model` updates always write back to a consistent scope.

|

||||

|

||||

- `/model` and `/auth` persist `model.name` (where applicable) and `security.auth.selectedType` to the closest writable scope that already defines `modelProviders`; otherwise they fall back to the user scope. This keeps workspace/user files in sync with the active provider catalog.
- Without `modelProviders`, the resolver mixes CLI/env/settings layers, which is fine for single-provider setups but cumbersome when frequently switching. Define provider catalogs whenever multi-model workflows are common so that switches stay atomic, source-attributed, and debuggable.

|

||||

|

||||

#### context

|

||||

|

||||

| Setting | Type | Description | Default |

|

||||

@@ -480,7 +381,7 @@ Arguments passed directly when running the CLI can override other configurations

|

||||

| `--telemetry-otlp-protocol` | | Sets the OTLP protocol for telemetry (`grpc` or `http`). | | Defaults to `grpc`. See [telemetry](../../developers/development/telemetry) for more information. |

|

||||

| `--telemetry-log-prompts` | | Enables logging of prompts for telemetry. | | See [telemetry](../../developers/development/telemetry) for more information. |

|

||||

| `--checkpointing` | | Enables [checkpointing](../features/checkpointing). | | |

|

||||

| `--acp` | | Enables ACP mode (Agent Client Protocol). Useful for IDE/editor integrations like [Zed](../integration-zed). | | Stable. Replaces the deprecated `--experimental-acp` flag. |

|

||||

| `--experimental-acp` | | Enables ACP mode (Agent Control Protocol). Useful for IDE/editor integrations like [Zed](../integration-zed). | | Experimental. |

|

||||

| `--experimental-skills` | | Enables experimental [Agent Skills](../features/skills) (registers the `skill` tool and loads Skills from `.qwen/skills/` and `~/.qwen/skills/`). | | Experimental. |

|

||||

| `--extensions` | `-e` | Specifies a list of extensions to use for the session. | Extension names | If not provided, all available extensions are used. Use the special term `qwen -e none` to disable all extensions. Example: `qwen -e my-extension -e my-other-extension` |

|

||||

| `--list-extensions` | `-l` | Lists all available extensions and exits. | | |

|

||||

|

||||

@@ -59,7 +59,6 @@ Commands for managing AI tools and models.

|

||||

| ---------------- | --------------------------------------------- | --------------------------------------------- |

|

||||

| `/mcp` | List configured MCP servers and tools | `/mcp`, `/mcp desc` |

|

||||

| `/tools` | Display currently available tool list | `/tools`, `/tools desc` |

|

||||

| `/skills` | List and run available skills (experimental) | `/skills`, `/skills <name>` |

|

||||

| `/approval-mode` | Change approval mode for tool usage | `/approval-mode <mode (auto-edit)> --project` |

|

||||

| →`plan` | Analysis only, no execution | Secure review |

|

||||

| →`default` | Require approval for edits | Daily use |

|

||||

|

||||

@@ -49,8 +49,6 @@ Cross-platform sandboxing with complete process isolation.

|

||||

|

||||

By default, Qwen Code uses a published sandbox image (configured in the CLI package) and will pull it as needed.

|

||||

|

||||

The container sandbox mounts your workspace and your `~/.qwen` directory into the container so auth and settings persist between runs.

|

||||

|

||||

**Best for**: Strong isolation on any OS, consistent tooling inside a known image.

|

||||

|

||||

### Choosing a method

|

||||

@@ -159,13 +157,22 @@ For a working allowlist-style proxy example, see: [Example Proxy Script](/develo

|

||||

|

||||

## Linux UID/GID handling

|

||||

|

||||

On Linux, Qwen Code defaults to enabling UID/GID mapping so the sandbox runs as your user (and reuses the mounted `~/.qwen`). Override with:

|

||||

The sandbox automatically handles user permissions on Linux. Override these permissions with:

|

||||

|

||||

```bash
export SANDBOX_SET_UID_GID=true # Force host UID/GID
export SANDBOX_SET_UID_GID=false # Disable UID/GID mapping
```

|

||||

|

||||

## Customizing the sandbox environment (Docker/Podman)

|

||||

|

||||

If you need extra tools inside the container (e.g., `git`, `python`, `rg`), create a custom Dockerfile:

|

||||

|

||||

- Path: `.qwen/sandbox.Dockerfile`

|

||||

- Then run with: `BUILD_SANDBOX=1 qwen -s ...`

|

||||

|

||||

This builds a project-specific image based on the default sandbox image.

|

||||

|

||||

## Troubleshooting

|

||||

|

||||

### Common issues

|

||||

|

||||

@@ -27,14 +27,6 @@ Agent Skills package expertise into discoverable capabilities. Each Skill consis

|

||||

|

||||

Skills are **model-invoked** — the model autonomously decides when to use them based on your request and the Skill’s description. This is different from slash commands, which are **user-invoked** (you explicitly type `/command`).

|

||||

|

||||

If you want to invoke a Skill explicitly, use the `/skills` slash command:

|

||||

|

||||

```bash

|

||||

/skills <skill-name>

|

||||

```

|

||||

|

||||

The `/skills` command is only available when you run with `--experimental-skills`. Use autocomplete to browse available Skills and descriptions.

|

||||

|

||||

### Benefits

|

||||

|

||||

- Extend Qwen Code for your workflows

|

||||

|

||||

@@ -1,57 +0,0 @@

|

||||

# JetBrains IDEs

|

||||

|

||||

> JetBrains IDEs provide native support for AI coding assistants through the Agent Client Protocol (ACP). This integration allows you to use Qwen Code directly within your JetBrains IDE with real-time code suggestions.

|

||||

|

||||

### Features

|

||||

|

||||

- **Native agent experience**: Integrated AI assistant panel within your JetBrains IDE
- **Agent Client Protocol**: Full support for ACP enabling advanced IDE interactions
- **Symbol management**: #-mention files to add them to the conversation context
- **Conversation history**: Access to past conversations within the IDE

|

||||

|

||||

### Requirements

|

||||

|

||||

- JetBrains IDE with ACP support (IntelliJ IDEA, WebStorm, PyCharm, etc.)

|

||||

- Qwen Code CLI installed

|

||||

|

||||

### Installation

|

||||

|

||||

1. Install Qwen Code CLI:

|

||||

|

||||

```bash

|

||||

npm install -g @qwen-code/qwen-code

|

||||

```

|

||||

|

||||

2. Open your JetBrains IDE and navigate to the AI Chat tool window.

|

||||

|

||||

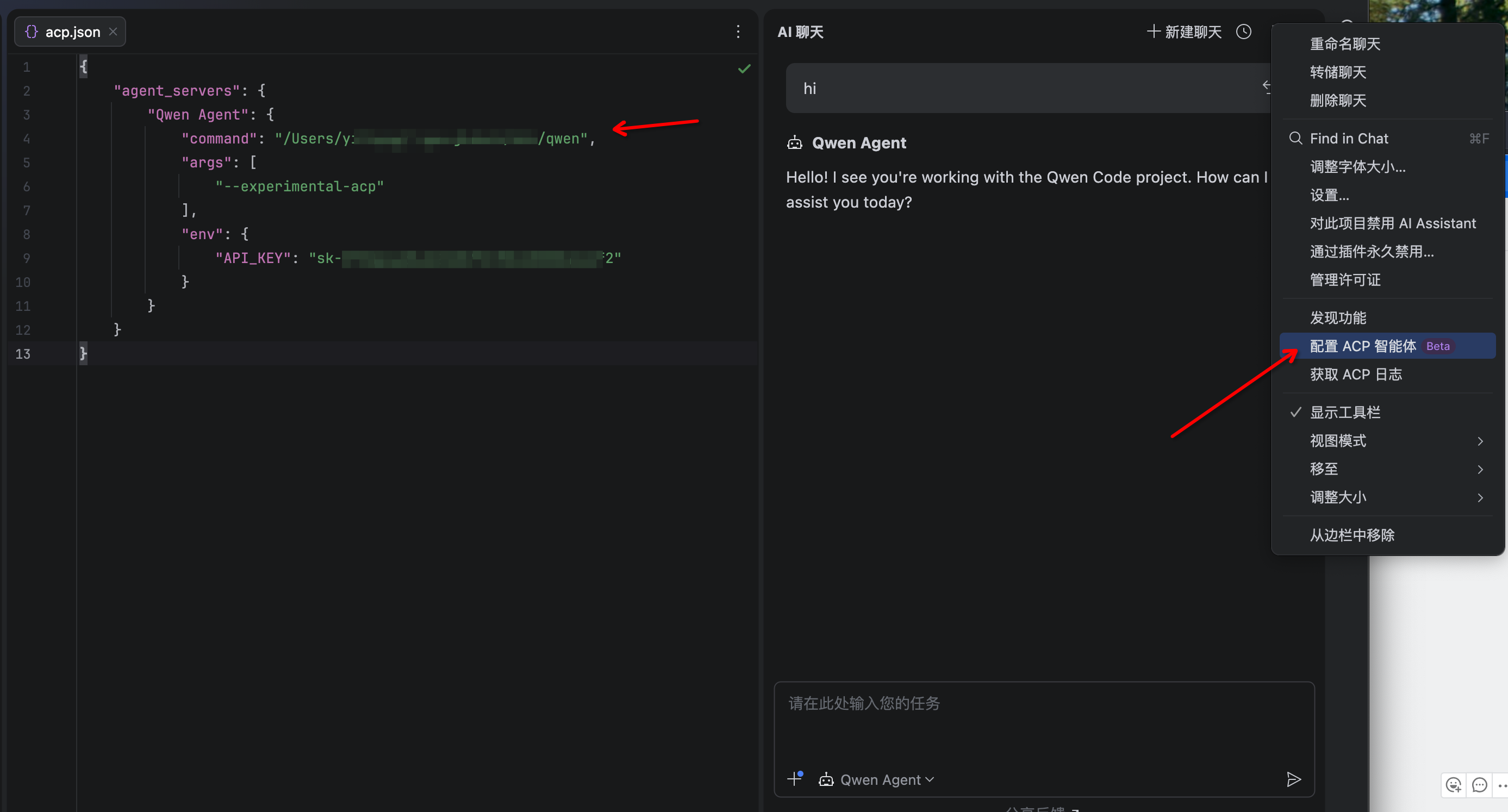

3. Click the 3-dot menu in the upper-right corner, select **Configure ACP Agent**, and configure Qwen Code with the following settings:

|

||||

|

||||

```json
{
  "agent_servers": {
    "qwen": {
      "command": "/path/to/qwen",
      "args": ["--acp"],
      "env": {}
    }
  }
}
```

|

||||

|

||||

4. The Qwen Code agent should now be available in the AI Assistant panel.

|

||||

|

||||

|

||||

|

||||

## Troubleshooting

|

||||

|

||||

### Agent not appearing

|

||||

|

||||

- Run `qwen --version` in terminal to verify installation
- Ensure your JetBrains IDE version supports ACP
- Restart your JetBrains IDE

|

||||

|

||||

### Qwen Code not responding

|

||||

|

||||

- Check your internet connection

|

||||

- Verify CLI works by running `qwen` in terminal

|

||||

- [File an issue on GitHub](https://github.com/qwenlm/qwen-code/issues) if the problem persists

|

||||

@@ -18,7 +18,7 @@

|

||||

|

||||

### Requirements

|

||||

|

||||

- VS Code 1.85.0 or higher

|

||||

- VS Code 1.98.0 or higher

|

||||

|

||||

### Installation

|

||||

|

||||

@@ -34,7 +34,7 @@

|

||||

|

||||

### Extension not installing

|

||||

|

||||

- Ensure you have VS Code 1.85.0 or higher

|

||||

- Ensure you have VS Code 1.98.0 or higher

|

||||

- Check that VS Code has permission to install extensions

|

||||

- Try installing directly from the Marketplace website

|

||||

|

||||

|

||||

@@ -1,6 +1,6 @@

|

||||

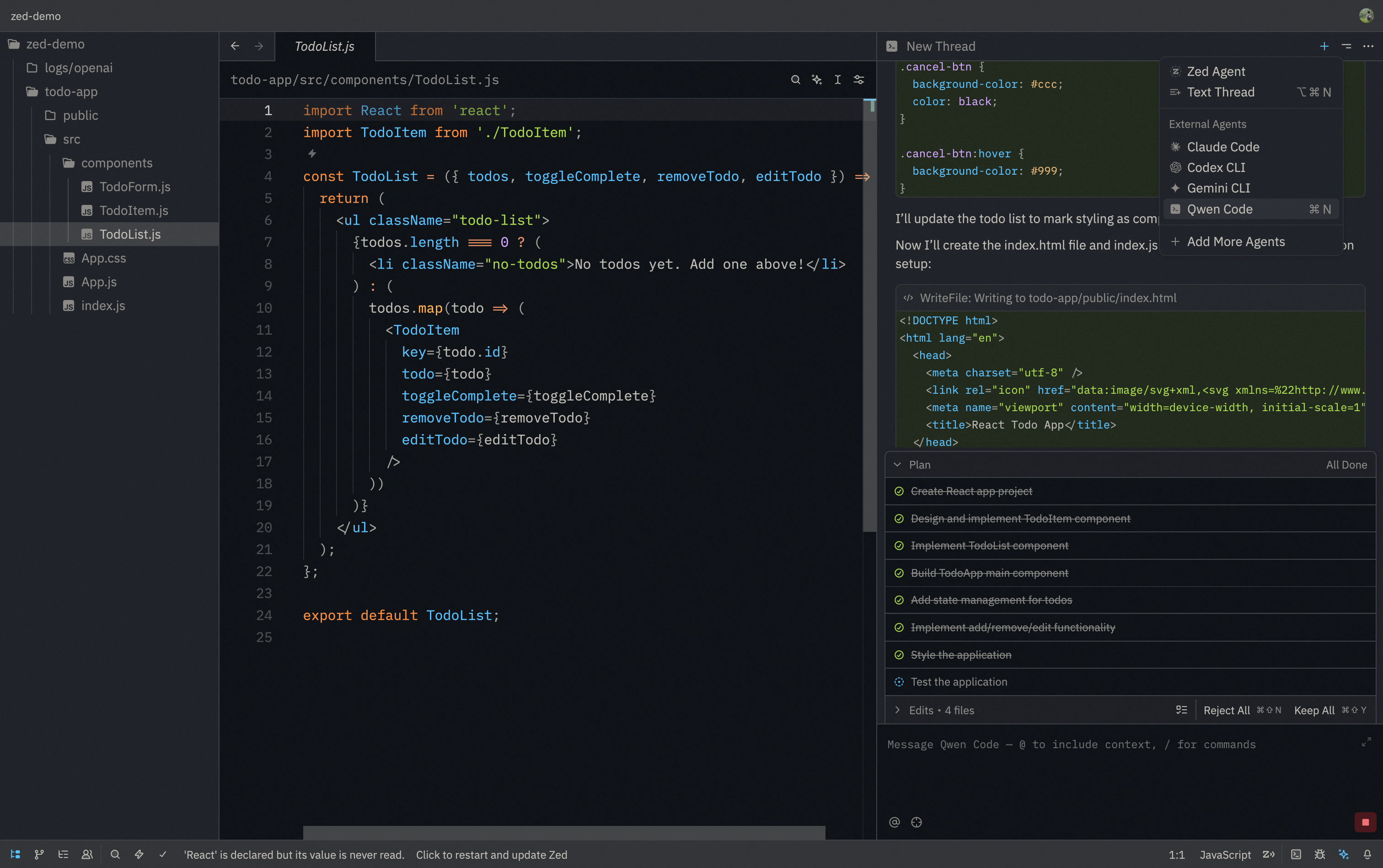

# Zed Editor

|

||||

|

||||

> Zed Editor provides native support for AI coding assistants through the Agent Client Protocol (ACP). This integration allows you to use Qwen Code directly within Zed's interface with real-time code suggestions.

|

||||

> Zed Editor provides native support for AI coding assistants through the Agent Control Protocol (ACP). This integration allows you to use Qwen Code directly within Zed's interface with real-time code suggestions.

|

||||

|

||||

|

||||

|

||||

@@ -32,7 +32,7 @@

|

||||

"Qwen Code": {

|

||||

"type": "custom",

|

||||

"command": "qwen",

|

||||

"args": ["--acp"],

|

||||

"args": ["--experimental-acp"],

|

||||

"env": {}

|

||||

}

|

||||

```

|

||||

|

||||

@@ -1,6 +1,5 @@

|

||||

# Qwen Code overview

|

||||

|

||||

[](https://npm-compare.com/@qwen-code/qwen-code)

|

||||

[](https://npm-compare.com/@qwen-code/qwen-code)

|

||||

[](https://www.npmjs.com/package/@qwen-code/qwen-code)

|

||||

|

||||

> Learn about Qwen Code, Qwen's agentic coding tool that lives in your terminal and helps you turn ideas into code faster than ever before.

|

||||

|

||||

@@ -159,7 +159,7 @@ Qwen Code will:

|

||||

|

||||

### Test out other common workflows

|

||||

|

||||

There are a number of ways to work with Qwen Code:

|

||||

There are a number of ways to work with Claude:

|

||||

|

||||

**Refactor code**

|

||||

|

||||

|

||||

@@ -9,18 +9,11 @@ This guide provides solutions to common issues and debugging tips, including top

|

||||

|

||||

## Authentication or login errors

|

||||

|

||||

- **Error: `UNABLE_TO_GET_ISSUER_CERT_LOCALLY`, `UNABLE_TO_VERIFY_LEAF_SIGNATURE`, or `unable to get local issuer certificate`**

|

||||

- **Error: `UNABLE_TO_GET_ISSUER_CERT_LOCALLY` or `unable to get local issuer certificate`**

|

||||

- **Cause:** You may be on a corporate network with a firewall that intercepts and inspects SSL/TLS traffic. This often requires a custom root CA certificate to be trusted by Node.js.

|

||||

- **Solution:** Set the `NODE_EXTRA_CA_CERTS` environment variable to the absolute path of your corporate root CA certificate file.

|

||||

- Example: `export NODE_EXTRA_CA_CERTS=/path/to/your/corporate-ca.crt`

|

||||

|

||||

- **Error: `Device authorization flow failed: fetch failed`**

|

||||

- **Cause:** Node.js could not reach Qwen OAuth endpoints (often a proxy or SSL/TLS trust issue). When available, Qwen Code will also print the underlying error cause (for example: `UNABLE_TO_VERIFY_LEAF_SIGNATURE`).

|

||||

- **Solution:**

|

||||

- Confirm you can access `https://chat.qwen.ai` from the same machine/network.

|

||||

- If you are behind a proxy, set it via `qwen --proxy <url>` (or the `proxy` setting in `settings.json`).

|

||||

- If your network uses a corporate TLS inspection CA, set `NODE_EXTRA_CA_CERTS` as described above.

|

||||

|

||||

- **Issue: Unable to display UI after authentication failure**

|

||||

- **Cause:** If authentication fails after selecting an authentication type, the `security.auth.selectedType` setting may be persisted in `settings.json`. On restart, the CLI may get stuck trying to authenticate with the failed auth type and fail to display the UI.

|

||||

- **Solution:** Clear the `security.auth.selectedType` configuration item in your `settings.json` file:

|

||||

|

||||

@@ -80,11 +80,10 @@ type PermissionHandler = (

|

||||

|

||||

/**

|

||||

* Sets up an ACP test environment with all necessary utilities.

|

||||

* @param useNewFlag - If true, uses --acp; if false, uses --experimental-acp (for backward compatibility testing)

|

||||

*/

|

||||

function setupAcpTest(

|

||||

rig: TestRig,

|

||||

options?: { permissionHandler?: PermissionHandler; useNewFlag?: boolean },

|

||||

options?: { permissionHandler?: PermissionHandler },

|

||||

) {

|

||||

const pending = new Map<number, PendingRequest>();

|

||||

let nextRequestId = 1;

|

||||

@@ -96,13 +95,9 @@ function setupAcpTest(

|

||||

const permissionHandler =

|

||||

options?.permissionHandler ?? (() => ({ optionId: 'proceed_once' }));

|

||||

|

||||

// Use --acp by default, but allow testing with --experimental-acp for backward compatibility

|

||||

const acpFlag =

|

||||

options?.useNewFlag !== false ? '--acp' : '--experimental-acp';

|

||||

|

||||

const agent = spawn(

|

||||

'node',

|

||||

[rig.bundlePath, acpFlag, '--no-chat-recording'],

|

||||

[rig.bundlePath, '--experimental-acp', '--no-chat-recording'],

|

||||

{

|

||||

cwd: rig.testDir!,

|

||||

stdio: ['pipe', 'pipe', 'pipe'],

|

||||

@@ -626,99 +621,3 @@ function setupAcpTest(

|

||||

}

|

||||

});

|

||||

});

|

||||

|

||||

(IS_SANDBOX ? describe.skip : describe)(

|

||||

'acp flag backward compatibility',

|

||||

() => {

|

||||

it('should work with deprecated --experimental-acp flag and show warning', async () => {

|

||||

const rig = new TestRig();

|

||||

rig.setup('acp backward compatibility');

|

||||

|

||||

const { sendRequest, cleanup, stderr } = setupAcpTest(rig, {

|

||||

useNewFlag: false,

|

||||

});

|

||||

|

||||

try {

|

||||

const initResult = await sendRequest('initialize', {

|

||||

protocolVersion: 1,

|

||||

clientCapabilities: {

|

||||

fs: { readTextFile: true, writeTextFile: true },

|

||||

},

|

||||

});

|

||||

expect(initResult).toBeDefined();

|

||||

|

||||

// Verify deprecation warning is shown

|

||||

const stderrOutput = stderr.join('');

|

||||

expect(stderrOutput).toContain('--experimental-acp is deprecated');

|

||||

expect(stderrOutput).toContain('Please use --acp instead');

|

||||

|

||||

await sendRequest('authenticate', { methodId: 'openai' });

|

||||

|

||||

const newSession = (await sendRequest('session/new', {

|

||||

cwd: rig.testDir!,

|

||||

mcpServers: [],

|

||||

})) as { sessionId: string };

|

||||

expect(newSession.sessionId).toBeTruthy();

|

||||

|

||||

// Verify functionality still works

|

||||

const promptResult = await sendRequest('session/prompt', {

|

||||

sessionId: newSession.sessionId,

|

||||

prompt: [{ type: 'text', text: 'Say hello.' }],

|

||||

});

|

||||

expect(promptResult).toBeDefined();

|

||||

} catch (e) {

|

||||

if (stderr.length) {

|

||||

console.error('Agent stderr:', stderr.join(''));

|

||||

}

|

||||

throw e;

|

||||

} finally {

|

||||

await cleanup();

|

||||

}

|

||||

});

|

||||

|

||||

it('should work with new --acp flag without warnings', async () => {

|

||||

const rig = new TestRig();

|

||||

rig.setup('acp new flag');

|

||||

|

||||

const { sendRequest, cleanup, stderr } = setupAcpTest(rig, {

|

||||

useNewFlag: true,

|

||||

});

|

||||

|

||||

try {

|

||||

const initResult = await sendRequest('initialize', {

|

||||

protocolVersion: 1,

|

||||

clientCapabilities: {

|

||||

fs: { readTextFile: true, writeTextFile: true },

|

||||

},

|

||||

});

|

||||

expect(initResult).toBeDefined();

|

||||

|

||||

// Verify no deprecation warning is shown

|

||||

const stderrOutput = stderr.join('');

|

||||

expect(stderrOutput).not.toContain('--experimental-acp is deprecated');

|

||||

|

||||

await sendRequest('authenticate', { methodId: 'openai' });

|

||||

|

||||

const newSession = (await sendRequest('session/new', {

|

||||

cwd: rig.testDir!,

|

||||

mcpServers: [],

|

||||

})) as { sessionId: string };

|

||||

expect(newSession.sessionId).toBeTruthy();

|

||||

|

||||

// Verify functionality works

|

||||

const promptResult = await sendRequest('session/prompt', {

|

||||

sessionId: newSession.sessionId,

|

||||

prompt: [{ type: 'text', text: 'Say hello.' }],

|

||||

});

|

||||

expect(promptResult).toBeDefined();

|

||||

} catch (e) {

|

||||

if (stderr.length) {

|

||||

console.error('Agent stderr:', stderr.join(''));

|

||||

}

|

||||

throw e;

|

||||

} finally {

|

||||

await cleanup();

|

||||

}

|

||||

});

|

||||

},

|

||||

);

|

||||

|

||||

@@ -831,7 +831,7 @@ describe('Permission Control (E2E)', () => {

|

||||

TEST_TIMEOUT,

|

||||

);

|

||||

|

||||

it.skip(

|

||||

it(

|

||||

'should execute dangerous commands without confirmation',

|

||||

async () => {

|

||||

const q = query({

|

||||

|

||||

29

package-lock.json

generated

@@ -1,12 +1,12 @@

|

||||

{

|

||||

"name": "@qwen-code/qwen-code",

|

||||

"version": "0.7.0",

|

||||

"version": "0.6.0",

|

||||

"lockfileVersion": 3,

|

||||

"requires": true,

|

||||

"packages": {

|

||||

"": {

|

||||

"name": "@qwen-code/qwen-code",

|

||||

"version": "0.7.0",

|

||||

"version": "0.6.0",

|

||||

"workspaces": [

|

||||

"packages/*"

|

||||

],

|

||||

@@ -39,6 +39,7 @@

|

||||

"globals": "^16.0.0",

|

||||

"husky": "^9.1.7",

|

||||

"json": "^11.0.0",

|

||||

"json-schema": "^0.4.0",

|

||||

"lint-staged": "^16.1.6",

|

||||

"memfs": "^4.42.0",

|

||||

"mnemonist": "^0.40.3",

|

||||

@@ -6216,7 +6217,10 @@

|

||||

"version": "4.0.3",

|

||||

"resolved": "https://registry.npmjs.org/chokidar/-/chokidar-4.0.3.tgz",

|

||||

"integrity": "sha512-Qgzu8kfBvo+cA4962jnP1KkS6Dop5NS6g7R5LFYJr4b8Ub94PPQXUksCw9PvXoeXPRRddRNC5C1JQUR2SMGtnA==",

|

||||

"dev": true,

|

||||

"license": "MIT",

|

||||

"optional": true,

|

||||

"peer": true,

|

||||

"dependencies": {

|

||||

"readdirp": "^4.0.1"

|

||||

},

|

||||

@@ -10804,6 +10808,13 @@

|

||||

"node": "^18.17.0 || >=20.5.0"

|

||||

}

|

||||

},

|

||||

"node_modules/json-schema": {

|

||||

"version": "0.4.0",

|

||||

"resolved": "https://registry.npmjs.org/json-schema/-/json-schema-0.4.0.tgz",

|

||||

"integrity": "sha512-es94M3nTIfsEPisRafak+HDLfHXnKBhV3vU5eqPcS3flIWqcxJWgXHXiey3YrpaNsanY5ei1VoYEbOzijuq9BA==",

|

||||

"dev": true,

|

||||

"license": "(AFL-2.1 OR BSD-3-Clause)"

|

||||

},

|

||||

"node_modules/json-schema-traverse": {

|

||||

"version": "0.4.1",

|

||||

"resolved": "https://registry.npmjs.org/json-schema-traverse/-/json-schema-traverse-0.4.1.tgz",

|

||||

@@ -13879,7 +13890,10 @@

|

||||

"version": "4.1.2",

|

||||

"resolved": "https://registry.npmjs.org/readdirp/-/readdirp-4.1.2.tgz",

|

||||

"integrity": "sha512-GDhwkLfywWL2s6vEjyhri+eXmfH6j1L7JE27WhqLeYzoh/A3DBaYGEj2H/HFZCn/kMfim73FXxEJTw06WtxQwg==",

|

||||

"dev": true,

|

||||

"license": "MIT",

|

||||

"optional": true,

|

||||

"peer": true,

|

||||

"engines": {

|

||||

"node": ">= 14.18.0"

|

||||

},

|

||||

@@ -17310,7 +17324,7 @@

|

||||

},

|

||||

"packages/cli": {

|

||||

"name": "@qwen-code/qwen-code",

|

||||

"version": "0.7.0",

|

||||

"version": "0.6.0",

|

||||

"dependencies": {

|

||||

"@google/genai": "1.30.0",

|

||||

"@iarna/toml": "^2.2.5",

|

||||

@@ -17947,7 +17961,7 @@

|

||||

},

|

||||

"packages/core": {

|

||||

"name": "@qwen-code/qwen-code-core",

|

||||

"version": "0.7.0",

|

||||

"version": "0.6.0",

|

||||

"hasInstallScript": true,

|

||||

"dependencies": {

|

||||

"@anthropic-ai/sdk": "^0.36.1",

|

||||

@@ -17968,7 +17982,6 @@

|

||||

"ajv-formats": "^3.0.0",

|

||||